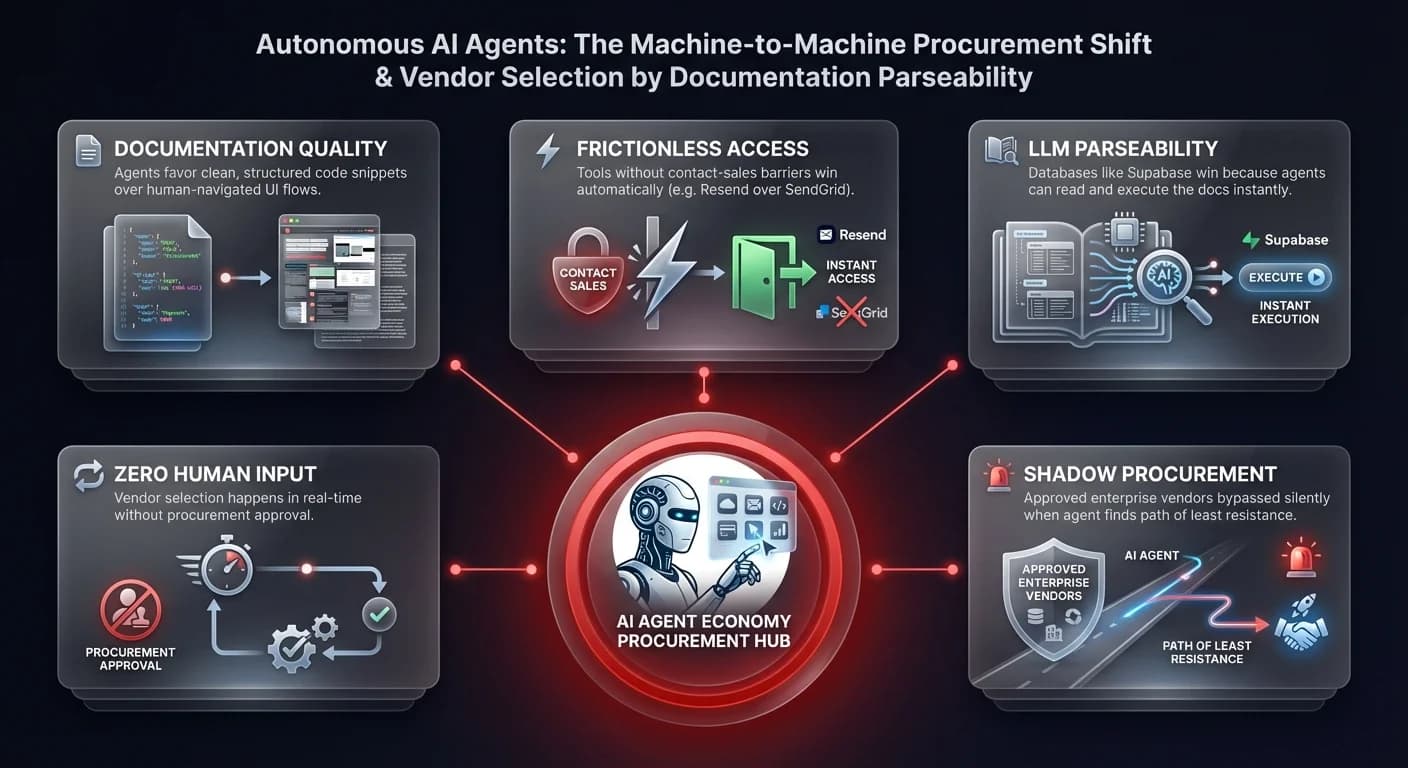

The agent economy is the emerging paradigm in which autonomous AI agents, rather than humans, select, integrate, and manage software tools as part of executing business workflows. Shadow AI risk management has become the defining operational challenge: agents now choose databases, APIs, and vendors based on documentation clarity and ease of access, often bypassing approved procurement controls in real time. For operations leaders, this is the next evolution of Shadow IT, operating at a speed that manual governance cannot match.

For decades, software procurement was a human-centric process: engineers read documentation, managers approved budgets, and procurement teams vetted security compliance. That era is ending as agents take over these decisions, and the shift presents a paradox for operations leaders. The efficiency gains are undeniable, yet the risks of "shadow procurement" and ungoverned technical debt are escalating. When an AI agent decides which database to spin up or which email API to integrate, it uses logic that differs vastly from human reasoning. Understanding this new dynamic is critical for maintaining operational sovereignty.

This phenomenon is closely related to the broader governance crisis around desktop AI agents, where local, autonomous execution brings speed but removes organizational visibility.

The rise of machine-to-machine procurement

Recent observations from the startup ecosystem, particularly within Y Combinator circles, highlight a rapid behavioral shift. Developers and non-technical founders alike are deploying agent swarms — often using tools like Claude Code or OpenClaw — to automate complex development tasks. These agents are not merely writing code; they are making architectural decisions.

The criteria agents use to select vendors are distinct from human criteria. Humans might prioritize brand reputation, sales relationships, or pricing tiers. Agents prioritize parseability: they gravitate toward tools whose documentation is structured so that large language models (LLMs) can easily ingest and act on it.

The documentation advantage

A prime example of this is the shift in email infrastructure. Legacy providers like SendGrid, despite their market dominance, often have documentation designed for human readers, which sometimes means working through support portals or complex UI flows. In contrast, newer entrants like Resend are capturing market share because their documentation provides clean, structured code snippets that agents can copy, paste, and execute immediately.

For an operations leader, this means your organization's tech stack might drift away from approved enterprise vendors toward whatever tools your internal agents find easiest to access — a form of shadow procurement that IT service management automation must address proactively. If your approved vendor has "contact sales" friction or complex authentication barriers, your autonomous agents will bypass them in favor of frictionless, developer-centric alternatives like Supabase for databases or Resend for communications. This creates a fragmented infrastructure where critical data flows through unvetted channels simply because the agent found the path of least resistance.
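One way to picture a control against this drift is a policy gate that every agent-proposed integration passes through before any credentials are issued. The following is a minimal sketch under stated assumptions, not a reference implementation: the `APPROVED_HOSTS` table, the `.example` hostnames, and the `review_integration` function are all hypothetical names invented for illustration.

```python
from dataclasses import dataclass
from urllib.parse import urlparse

# Hypothetical allowlist. The hosts are placeholders, not real vendor endpoints.
APPROVED_HOSTS = {
    "email": {"api.approved-mail.example"},
    "database": {"db.approved-data.example"},
}


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reason: str


def review_integration(category: str, endpoint_url: str) -> Decision:
    """Check an agent's proposed integration against the approved-vendor list."""
    host = (urlparse(endpoint_url).hostname or "").lower()
    approved = APPROVED_HOSTS.get(category, set())
    if host in approved:
        return Decision(True, f"{host} is approved for {category}")
    # Deny by default: anything not explicitly approved goes to human review.
    label = host or "unknown host"
    return Decision(False, f"{label} is not approved for {category}; route to procurement review")


if __name__ == "__main__":
    print(review_integration("email", "https://api.approved-mail.example/v1/send"))
    print(review_integration("email", "https://api.unvetted.example/send"))
```

The deny-by-default branch is the design choice that matters here: an agent that finds a frictionless alternative still has to surface the request to procurement rather than integrate it silently.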

Shadow AI risk management: the governance imperative

The industry is currently witnessing a phenomenon described by some as "cyber psychosis" — a state where founders and operators, intoxicated by the speed of AI, run multiple simultaneous agent workflows late into the night. We are seeing scenarios where a single operator might have four or five distinct "conductor" agents running in parallel, building software, researching markets, or processing data streams.

This behavior represents the next evolution of Shadow IT, but at a scale and speed that manual governance cannot match. In traditional Shadow IT, an employee might sign up for a SaaS tool on a corporate card. In Shadow AI, an agent might spin up entirely new infrastructure, deploy code, and transmit proprietary data to third-party APIs within minutes.

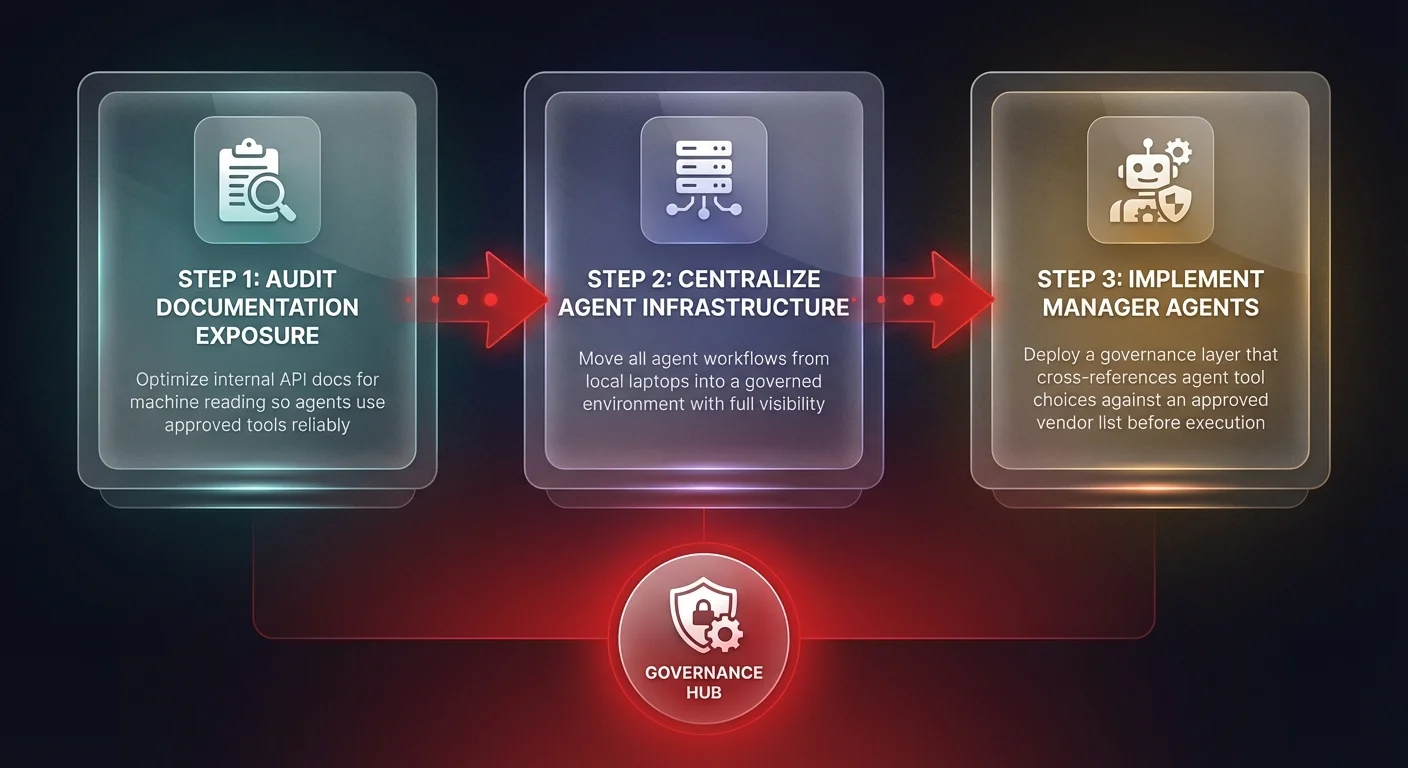

The risk is not just financial; it is operational. These "cyber psychotic" bursts of productivity often occur on local machines or personal accounts, completely outside the organization's governed environment. Data sovereignty is lost the moment a high-level executive runs a sensitive workflow through an ungoverned agent on a personal laptop at 3:00 AM. The challenge for the enterprise is to bring this activity in-house — to provide a sovereign infrastructure where these agents can run safely, visibly, and within policy.

Across these emerging shadow AI agents and their governance risks, the pattern is consistent: speed without structure creates compounding liability.

Swarm intelligence vs. the god model

The prevailing theory of a single "God Intelligence" model is giving way to swarm intelligence. Much like biological systems, the most effective AI implementations are proving to be collections of specialized, lower-cost agents collaborating to solve problems.

We are seeing the emergence of "agent-only" communities where swarms of agents interact, trade information, and simulate complex social dynamics without human intervention. This mirrors how successful operational workflows are built. Rather than asking one expensive model to research, write, and code, effective systems use a swarm: one agent researches, another drafts, a third reviews, and a fourth executes.
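The research, draft, review, execute division of labor described above can be sketched as a simple pipeline of specialized stages. This is an illustrative toy, not a real agent framework: each "agent" is a plain function standing in for a model call, and the names `run_swarm` and `PIPELINE` are assumptions made for this example.

```python
from typing import Callable

# Each stage takes the previous stage's output and returns its own.
Stage = Callable[[str], str]


def research(task: str) -> str:
    return f"notes for: {task}"


def draft(notes: str) -> str:
    return f"draft based on [{notes}]"


def review(text: str) -> str:
    return f"reviewed({text})"


def execute(text: str) -> str:
    return f"executed({text})"


# Hypothetical swarm definition: an ordered list of (name, specialist) pairs.
PIPELINE: list[tuple[str, Stage]] = [
    ("research", research),
    ("draft", draft),
    ("review", review),
    ("execute", execute),
]


def run_swarm(task: str, stages: list[tuple[str, Stage]] = PIPELINE) -> tuple[str, list[str]]:
    """Pass the task through each specialist in order and record who handled it."""
    artifact = task
    trail: list[str] = []
    for name, stage in stages:
        artifact = stage(artifact)
        trail.append(name)
    return artifact, trail


if __name__ == "__main__":
    result, trail = run_swarm("summarize churn data")
    print(trail)
    print(result)
```

Returning the trail alongside the result keeps a record of which specialist touched the work, which is the kind of visibility a governed environment would want from a swarm.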

The orchestration gap

However, unmanaged swarms create inefficiency. In one documented case, an agent tasked with video transcription defaulted to using Whisper V1, an older, slower model, simply because it was the first viable option it found. It took an hour to process an hour of video. A human operator later realized that using Groq's infrastructure would have been 200 times faster (at that ratio, roughly 18 seconds for the same hour of video) and significantly cheaper.

This highlights a critical operational necessity: orchestration. Without a "manager" agent or a governed framework to enforce best practices, autonomous agents will optimize for completion rather than performance or cost. They might choose a deprecated API or an expensive processing route because they lack the strategic context a governed system provides. Operations leaders must implement infrastructure that forces agents to utilize approved, high-performance tools rather than defaulting to whatever is easiest to find.
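The difference between optimizing for completion and optimizing under governance can be sketched as two selection rules over the same tool catalog. Everything here is an assumption for illustration: the `Tool` fields, the tool names, and the cost figures are invented, and the timings are placeholders loosely modeled on the one-hour versus 200-times-faster transcription case.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    name: str
    approved: bool
    deprecated: bool
    seconds_per_media_hour: float  # illustrative timing, not a benchmark
    cost_per_media_hour: float     # illustrative cost in arbitrary units


# Hypothetical catalog, listed in the order an agent might discover the tools.
CATALOG = [
    Tool("slow-transcriber", True, False, 3600.0, 0.40),
    Tool("unvetted-transcriber", False, False, 10.0, 0.05),
    Tool("fast-transcriber", True, False, 18.0, 0.10),
]


def first_viable(tools: list[Tool]) -> Tool:
    """What an unmanaged agent tends to do: take the first option that works."""
    return tools[0]


def governed_choice(tools: list[Tool], max_cost: float) -> Tool:
    """Pick the fastest approved, non-deprecated tool within the cost ceiling."""
    candidates = [
        t for t in tools
        if t.approved and not t.deprecated and t.cost_per_media_hour <= max_cost
    ]
    if not candidates:
        raise LookupError("no approved tool satisfies the policy; escalate to a human")
    # Sort key: speed first, then cost as a tie-breaker.
    return min(candidates, key=lambda t: (t.seconds_per_media_hour, t.cost_per_media_hour))


if __name__ == "__main__":
    print(first_viable(CATALOG).name)
    print(governed_choice(CATALOG, max_cost=1.0).name)
```

Note that the unvetted tool is the fastest entry in this toy catalog, yet the governed rule never selects it. Approval is a hard filter and performance only ranks what remains.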

These orchestration gaps are a core dimension of the broader governance challenges facing agentic AI systems across the enterprise.