Claude Code n8n workflows are self-building automation systems in which an AI coding agent architects, tests, and deploys entire n8n workflows from natural language descriptions, replacing the manual process of wiring together low-code nodes. At Ability.ai, we have deployed this approach for mid-market clients and consistently reduced workflow build time from hours to minutes. That speed makes structured governance more important, not less.

Specifically, the integration of Claude Code — an autonomous coding agent that lives in the terminal — with n8n's workflow automation platform demonstrates a new "self-building" architecture. For operations leaders, this represents a massive acceleration in time-to-value, but it also introduces new requirements for technical oversight and strategic planning. Here is what the latest research tells us about this emerging capability.

| Capability | Manual n8n Building | Claude Code + n8n |
|---|---|---|
| Build time per workflow | 4-12 hours | 15-45 minutes |
| Node wiring | Manual drag-and-drop | API-driven, automated |
| Error handling | Developer writes manually | Agent tests, self-corrects |
| Credential setup | Repeated per workflow | Template inheritance |
| PRD / governance layer | Optional, often skipped | Enforced before build starts |
| Who can build | n8n-trained developers | Operations leaders with AI oversight |
| Ownership | Your n8n instance | Your n8n instance |

AI workflow automation tools: from low-code to self-building agents

There has long been a debate in the automation community: should we use code (Python/Node.js) for flexibility, or low-code tools (n8n/Make) for observability? The emergence of Claude Code renders this binary choice obsolete. The most effective strategy is now using coding agents to build low-code infrastructure.

Claude Code excels at building applications, custom software, and iterating on logic. However, for ongoing business operations, n8n remains superior for deploying deterministic systems. It allows non-developers to visualize the logic, debug errors, and maintain the system over time. The breakthrough is that we no longer need to manually build the n8n workflow. We can treat Claude Code as a technical architect that constructs the n8n system for us. This represents a fundamental evolution of how AI coders can function as a coordinated team.

This creates a powerful symbiotic relationship: the AI agent handles the technical heavy lifting of API connections and JavaScript transformation, while the low-code platform provides the governance and stability required for enterprise operations. Companies leveraging this architecture for software development acceleration are seeing dramatic reductions in time-to-deployment.

The architecture of autonomy: skills and PRDs

The most critical insight from this research is that you cannot simply tell an AI to "build a lead gen bot." Without a structured plan, the agent will hallucinate ineffective workflows or get stuck in error loops. Successful implementation requires a "Skill"-based architecture that mirrors human engineering processes.

Phase 1: the PRD generator

Before a single line of code is written or a node is dragged, the system must generate a Product Requirement Document (PRD). In this workflow, the user feeds a raw transcript — such as a recording of a discovery call — into the agent. A specialized "PRD Generator Skill" analyzes the transcript and interviews the user to define constraints:

- Source verification: Where should data come from? (e.g., Google Maps)

- Enrichment logic: What specific data points are needed? (e.g., emails via FullEnrich)

- Error handling: Where should alerts go if the automation fails?

- Success criteria: How are leads qualified and scored?

This step enforces governance. It ensures the agent understands the business logic before it attempts technical execution.
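To make the governance gate concrete, here is a minimal sketch of how the four constraints above might be captured and checked before any build begins. The class name `WorkflowPRD`, its field names, and the approval flag are all hypothetical; the source does not describe the PRD's actual format.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class WorkflowPRD:
    """Hypothetical container for the constraints the PRD step collects."""
    data_source: str               # where data comes from, e.g. "Google Maps"
    enrichment_fields: List[str]   # data points to add, e.g. ["email"]
    alert_channel: str             # where alerts go if the automation fails
    qualification_rule: str        # how leads are qualified and scored
    approved: bool = False         # flipped to True once a human signs off

    def missing_fields(self) -> List[str]:
        """Return the names of required constraints that are still empty."""
        required = {
            "data_source": self.data_source,
            "enrichment_fields": self.enrichment_fields,
            "alert_channel": self.alert_channel,
            "qualification_rule": self.qualification_rule,
        }
        return [name for name, value in required.items() if not value]

    def ready_to_build(self) -> bool:
        """The build may start only when every constraint is set and approved."""
        return self.approved and not self.missing_fields()
```

In this sketch, a PRD with an empty `alert_channel` reports that field as missing, and `ready_to_build()` stays false until the gap is filled and the document is approved.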

Phase 2: the n8n builder skill

Once the PRD is approved, a second skill takes over. This is not a text-generation task; it is an active engineering task. The agent connects directly to the n8n instance via API. It doesn't just paste a massive JSON file; it builds the workflow node by node.
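As a rough illustration of node-by-node construction, the sketch below assembles a workflow definition one node at a time and prepares the HTTP request that would create it. It assumes n8n's public REST API accepts `POST /api/v1/workflows` with an `X-N8N-API-KEY` header; the helper names, the node parameters, and the example URLs are hypothetical, and the request is built but deliberately not sent.

```python
import json
import urllib.request


def new_workflow(name):
    """Start an empty workflow definition in n8n's JSON shape."""
    return {"name": name, "nodes": [], "connections": {}, "settings": {}}


def add_node(workflow, name, node_type, parameters, after=None):
    """Append one node and, if `after` is given, wire it to that earlier node."""
    position = [250 * len(workflow["nodes"]), 300]  # lay nodes out left to right
    workflow["nodes"].append({
        "name": name,
        "type": node_type,
        "typeVersion": 1,  # illustrative; real nodes may need a newer version
        "position": position,
        "parameters": parameters,
    })
    if after is not None:
        outputs = workflow["connections"].setdefault(after, {"main": [[]]})
        outputs["main"][0].append({"node": name, "type": "main", "index": 0})
    return workflow


def create_request(base_url, api_key, workflow):
    """Build (but do not send) the request that would create the workflow."""
    return urllib.request.Request(
        url=f"{base_url}/api/v1/workflows",  # assumed endpoint path
        data=json.dumps(workflow).encode("utf-8"),
        headers={"X-N8N-API-KEY": api_key, "Content-Type": "application/json"},
        method="POST",
    )


# Hypothetical usage: two nodes, added and wired one at a time.
wf = new_workflow("Lead gen sketch")
add_node(wf, "Start", "n8n-nodes-base.manualTrigger", {})
add_node(wf, "Fetch leads", "n8n-nodes-base.httpRequest",
         {"url": "https://example.com/leads"}, after="Start")
req = create_request("https://n8n.example.com", "YOUR_API_KEY", wf)
```

Building the definition incrementally, rather than emitting one large JSON blob, leaves a natural point after each `add_node` call where the agent can run and verify the new node.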

Real-time testing and debugging

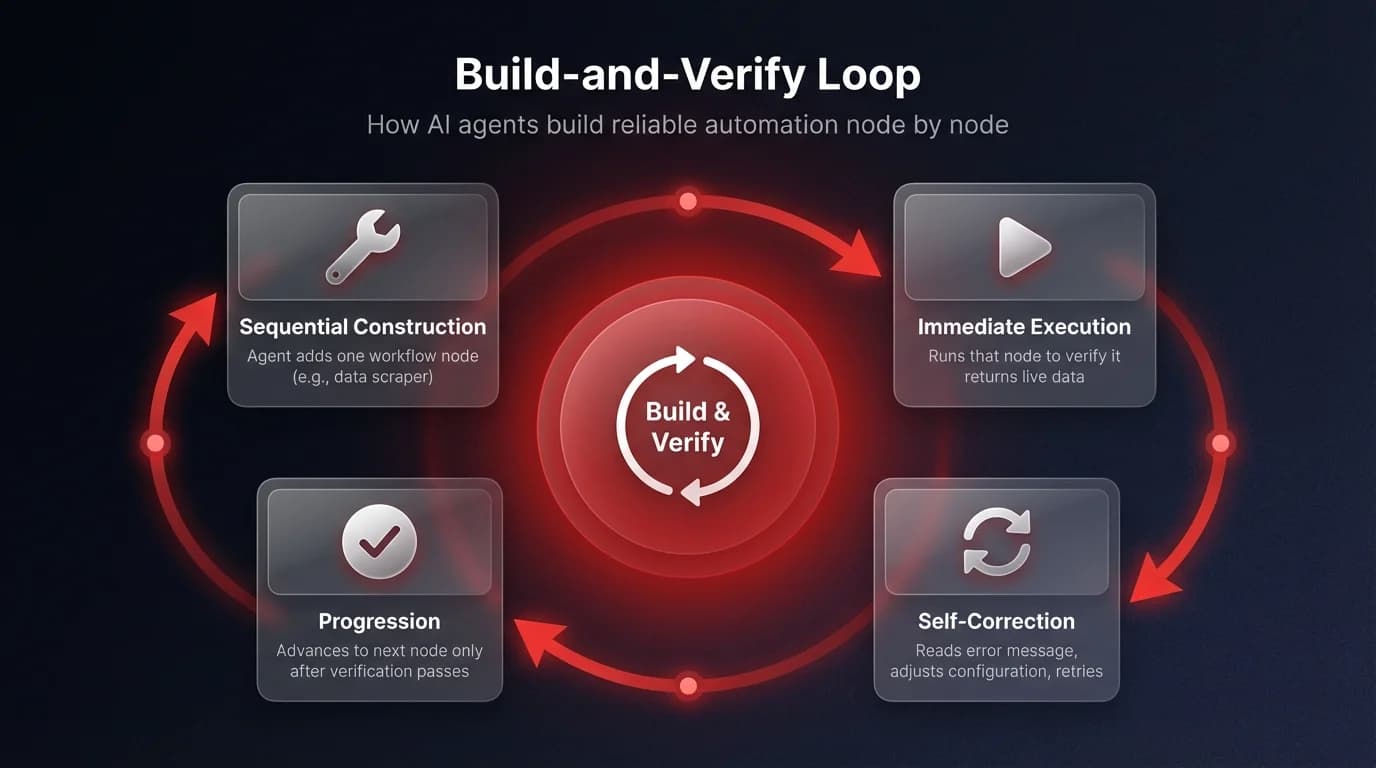

What separates this approach from standard LLM code generation is the agent's ability to execute and verify its work in real time. The research highlights a rigorous testing methodology:

- Sequential construction: The agent adds a node (e.g., a Google Maps scraper).

- Immediate execution: It runs that specific node to see if it returns data.

- Self-correction: If the node fails, the agent reads the error message, adjusts the configuration, and tries again.

- Progression: Only after a node is verified does it move to the next step (e.g., adding a Loop node).

This mimics how a senior engineer works. By testing incrementally, the agent prevents the "cascade of errors" common in AI-generated code. For example, in a test case involving a medical equipment supplier, the agent successfully navigated from scraping leads to enriching them with email addresses, creating a loop structure that processed items one by one rather than crashing on bulk data. This same incremental build-and-verify pattern powered the AI content system we built for a SaaS client, where autonomous agents handled the full production pipeline.
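The add, execute, self-correct, progress cycle described above can be sketched as a small control loop. This is an illustration of the pattern only, not the agent's actual implementation: `run_node` and `fix_config` are hypothetical callables standing in for "execute this node in n8n" and "adjust the configuration based on the error message".

```python
def build_with_verification(steps, run_node, fix_config, max_attempts=3):
    """Add nodes one at a time, moving on only after each one runs cleanly.

    steps:      list of (node_name, config) pairs in build order
    run_node:   hypothetical callable(name, config) -> (ok, output_or_error)
    fix_config: hypothetical callable(config, error) -> adjusted config
    """
    verified = []
    for name, config in steps:
        result = None
        for _attempt in range(max_attempts):
            ok, result = run_node(name, config)
            if ok:
                # Progression: the node is verified, so move to the next step.
                verified.append((name, config))
                break
            # Self-correction: use the error message to adjust the configuration.
            config = fix_config(config, result)
        else:
            # Stop here so a broken node cannot cascade into later steps.
            raise RuntimeError(
                f"{name} still failing after {max_attempts} attempts: {result}"
            )
    return verified
```

Raising as soon as a node exhausts its attempts is the design choice that prevents the cascade of errors: later nodes are never built on top of an unverified one.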

The ability to run parallel AI workflows while maintaining sequential verification is what makes this architecture resilient enough for production use.