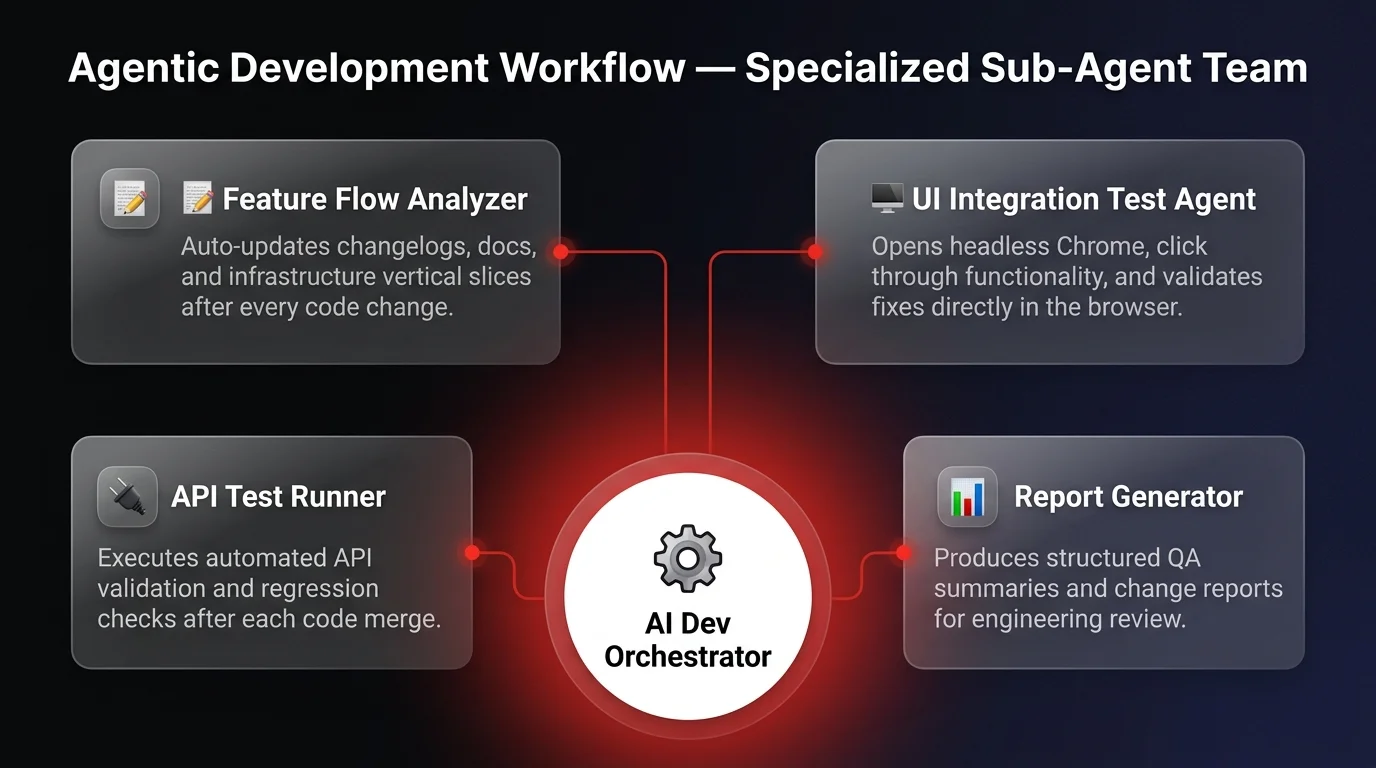

An agentic development workflow is a software engineering system in which specialized AI sub-agents autonomously handle testing, documentation, changelogs, and quality assurance alongside code generation. Most developers still use AI like a smarter Stack Overflow: they copy-paste the generated code and update the docs by hand. But we've moved beyond coding assistants to fully agentic development systems. The point isn't typing faster; it's orchestrating a digital team where specialized sub-agents handle the grunt work so you can focus entirely on architecture and problem-solving.

The power of agentic workflows

Let me show you what this looks like in practice. Recently, I had my AI assistant fix a simple dark mode bug. In a traditional workflow, the AI spits out the code, you paste it in, and then you have to remember to update the changelog, tweak the documentation, and check for regressions. That's high-friction, and frankly, it's low-leverage work.

But in my system, I've orchestrated a different approach. As soon as the code was fixed, a specialized sub-agent, the Feature Flow Analyzer, kicked in immediately. It didn't wait for a prompt. It reviewed the code changes against the infrastructure's vertical slices, added an entry to the changelog, and updated the documentation for the affected feature flow.
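A post-change hook of that kind might look roughly like the Python sketch below. It assumes, hypothetically, that each vertical slice lives in its own folder under `src/features/`, that feature flow docs live at `docs/feature-flows/<slice>.md`, and that changelog entries use Keep a Changelog-style section headings. The names `on_code_changed`, `slices_touched`, and `docs_to_update` are illustrative only, not the Feature Flow Analyzer's actual implementation; in a real agent the summary text would come from the model rather than being passed in.

```python
from dataclasses import dataclass
from pathlib import PurePosixPath

# Hypothetical layout: each vertical slice is a folder under src/features/
SLICE_ROOT = PurePosixPath("src/features")
# Hypothetical location of the per-feature flow documentation
DOCS_ROOT = PurePosixPath("docs/feature-flows")


def slices_touched(changed_paths: list[str]) -> set[str]:
    """Map changed file paths to the vertical slices (feature folders) they belong to."""
    slices = set()
    for raw in changed_paths:
        try:
            rel = PurePosixPath(raw).relative_to(SLICE_ROOT)
        except ValueError:
            continue  # file sits outside every slice, e.g. README.md
        if len(rel.parts) > 1:  # ignore loose files directly under src/features/
            slices.add(rel.parts[0])
    return slices


def docs_to_update(slices: list[str]) -> list[str]:
    """One feature flow document per affected slice."""
    return [str(DOCS_ROOT / f"{name}.md") for name in slices]


@dataclass
class ChangelogEntry:
    category: str  # Keep a Changelog section, e.g. "Fixed" or "Added"
    summary: str
    slices: list[str]

    def render(self) -> str:
        scope = ", ".join(self.slices) if self.slices else "general"
        return f"### {self.category}\n- {self.summary} ({scope})"


def on_code_changed(changed_paths: list[str], summary: str, category: str = "Fixed") -> ChangelogEntry:
    """Hypothetical hook an orchestrator fires after every accepted change, with no user prompt."""
    return ChangelogEntry(category, summary, sorted(slices_touched(changed_paths)))


entry = on_code_changed(
    ["src/features/theme/toggle.tsx", "src/features/theme/styles.css", "README.md"],
    "Fix dark mode bug",
)
print(entry.render())
print(docs_to_update(entry.slices))
```

The key design point is that the hook runs on the change event itself, so nobody has to remember to ask for the changelog or docs update.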

This is the power of an agentic workflow: it turns the AI from a passive tool into an active partner. The Feature Flow Analyzer isn't just generating text; it understands the project structure and takes ownership of the maintenance tasks most developers hate. That lets you keep a high-signal focus on problem-solving while the agents handle the administrative overhead; it's the same architecture behind our software development automation engagements. By assigning each sub-agent a specific role, you prevent context pollution (irrelevant details from one task crowding the context window used for another) and ensure each task is handled with precision.
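To make context isolation concrete, here is a hedged Python sketch. It assumes a generic model function that takes a list of role/content messages and returns reply text; `ModelFn`, `SubAgent`, and `Orchestrator` are hypothetical names, not any specific framework's API. Each sub-agent keeps a private history, and the orchestrator's own context receives only a one-line result per delegation.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical model interface: takes a role/content message list, returns the reply text.
# Any real LLM client could be wrapped behind this signature.
ModelFn = Callable[[list[dict]], str]


@dataclass
class SubAgent:
    name: str
    system_prompt: str
    model: ModelFn
    history: list[dict] = field(default_factory=list)  # private to this agent

    def run(self, task: str) -> str:
        # The sub-agent sees only its own instructions, its own past turns, and the new task
        if not self.history:
            self.history.append({"role": "system", "content": self.system_prompt})
        self.history.append({"role": "user", "content": task})
        reply = self.model(self.history)
        self.history.append({"role": "assistant", "content": reply})
        return reply


@dataclass
class Orchestrator:
    context: list[str] = field(default_factory=list)  # the primary agent's working context

    def delegate(self, agent: SubAgent, task: str) -> str:
        result = agent.run(task)
        # Only the result flows back; the sub-agent's full transcript never enters self.context
        self.context.append(f"[{agent.name}] {result}")
        return result
```

However long the documenter's own transcript grows, the primary context grows by exactly one line per delegated task.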

Quality assurance as specialization

But it doesn't stop at documentation. To truly amplify your development velocity, you need to think about quality assurance too. A real engineering team doesn't just have coders; it has QA specialists. Your AI architecture should look the same.

In our stack, we don't just hope the code works. We have a dedicated agent for UI integration tests. It isn't a text generator; it's an agent that can open a headless Chrome instance, navigate to the locally running application, and literally click through the functionality to validate the fix. Like a human QA tester, it verifies that the dark mode toggle actually works in the browser, not just that the code looks correct.
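The browser-driving check can be sketched behind a small interface. This Python sketch assumes the app marks its theme with a `data-theme` attribute on `<html>` and exposes a `#theme-toggle` button; both are hypothetical, as are the `Browser` protocol and `verify_dark_mode_toggle`. A real adapter could wrap Playwright's `page.goto`, `page.click`, and `page.get_attribute` against headless Chromium; here an in-memory `FakeBrowser` stands in so the logic runs without a browser.

```python
from typing import Optional, Protocol

TOGGLE = "#theme-toggle"  # hypothetical selector for the dark mode switch


class Browser(Protocol):
    """The minimal surface a UI test agent needs from a browser driver."""

    def goto(self, url: str) -> None: ...
    def click(self, selector: str) -> None: ...
    def attribute(self, selector: str, name: str) -> Optional[str]: ...


def verify_dark_mode_toggle(browser: Browser, base_url: str) -> list[str]:
    """Click the toggle like a human tester would; return failures (empty list = pass)."""
    failures = []
    browser.goto(base_url)
    start = browser.attribute("html", "data-theme")
    browser.click(TOGGLE)
    flipped = browser.attribute("html", "data-theme")
    if flipped == start:
        failures.append(f"theme did not change after click (still {start!r})")
    browser.click(TOGGLE)
    restored = browser.attribute("html", "data-theme")
    if restored != start:
        failures.append(f"second click did not restore {start!r}, got {restored!r}")
    return failures


class FakeBrowser:
    """In-memory stand-in for a headless browser; broken=True simulates the bug."""

    def __init__(self, broken: bool = False) -> None:
        self.theme = "light"
        self.broken = broken

    def goto(self, url: str) -> None:
        self.theme = "light"  # each page load starts in light mode

    def click(self, selector: str) -> None:
        if selector == TOGGLE and not self.broken:
            self.theme = "dark" if self.theme == "light" else "light"

    def attribute(self, selector: str, name: str) -> Optional[str]:
        if (selector, name) == ("html", "data-theme"):
            return self.theme
        return None
```

Keeping the check behind an interface means the same verification logic can run against a real headless browser in CI and against a fake in fast unit tests.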

We also deploy API test runners and report generators as separate, specialized entities. You wouldn't expect your lead architect to spend half their day manually clicking buttons or writing changelogs. So why do you expect your primary LLM to do it all in one context window?

The reality is that effective AI implementation requires specialization. Instead of one massive prompt trying to do everything, you orchestrate a system of autonomous sub-agents: an analyst, a tester, a documenter. This is how you achieve radical efficiency and ownership over your stack.
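One way to wire an analyst, a tester, and a documenter together is a sequential pipeline with a separate report step. The sketch below is an assumption, not the author's orchestrator: each role is a hypothetical callable returning `(ok, output)`, and the pipeline deliberately stops at the first failure so a change is never documented before it has passed its tests.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical role signature: takes a change description, returns (ok, output)
Role = Callable[[str], tuple[bool, str]]


@dataclass
class StepResult:
    role: str
    ok: bool
    output: str


def run_pipeline(change: str, roles: list[tuple[str, Role]]) -> list[StepResult]:
    """Run specialist roles in order; stop at the first failure."""
    results = []
    for name, role in roles:
        ok, output = role(change)
        results.append(StepResult(name, ok, output))
        if not ok:
            break  # e.g. a failing tester blocks the documenter
    return results


def report(results: list[StepResult]) -> str:
    """A separate report step: one line per specialist that ran."""
    return "\n".join(f"{'PASS' if r.ok else 'FAIL'} {r.role}: {r.output}" for r in results)


roles = [
    ("analyst", lambda change: (True, f"mapped {change!r} to the theme slice")),
    ("tester", lambda change: (False, "toggle did not switch theme")),
    ("documenter", lambda change: (True, "changelog updated")),
]
print(report(run_pipeline("dark mode fix", roles)))
```

Because the tester fails in this example, the documenter never runs and the report contains only two lines.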

Orchestrating your digital workforce

This level of automation isn't science fiction; it's available now if you know how to architect it. At Ability.ai, we help businesses move beyond simple chatbots to build robust, multi-agent systems that solve real engineering challenges. Let's stop playing with toys and orchestrate your new digital workforce.