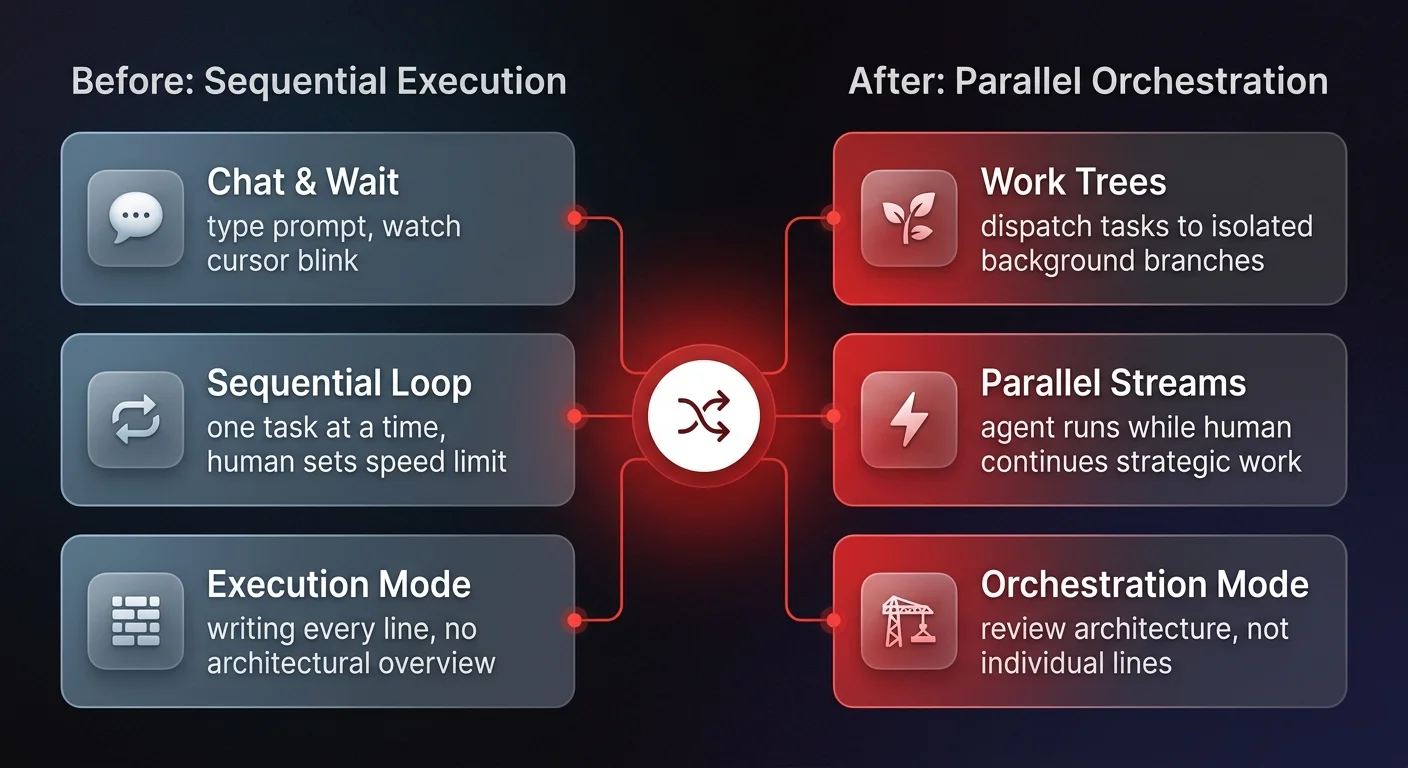

Parallel AI workflows replace the sequential "chat-and-wait" model by dispatching tasks to autonomous agents that run in the background while humans continue working. Using work trees and asynchronous delegation, operations leaders shift from execution to orchestration: they review drafts the agents have completed rather than watch a cursor blink.

Parallel AI workflows are rapidly emerging as the dividing line between high-performing technical teams and those stuck in the "chat-and-wait" cycle. For the past few years, the dominant interaction model with artificial intelligence has been linear: a user types a prompt, waits for the response to stream in, reads it, and then iterates. While useful for ad-hoc tasks, this sequential process creates a bottleneck in which the human sets the speed limit for the machine.

New research into developer workflows using tools like Codex reveals a fundamental shift. By using "work trees" (separate working copies of the same repository, each checked out on its own branch, so parallel tasks do not collide), operators can delegate complex background tasks to AI agents while continuing their own manual work. This isn't just a productivity hack for software engineers; it is a critical operating model for COOs and business leaders. The future of enterprise efficiency lies not in faster chatbots, but in the ability to orchestrate multiple asynchronous agentic workflows without losing operational control.

The death of the sequential waiting game

To understand the magnitude of this shift, consider the limitations of standard AI interactions. In a typical environment, such as a VS Code extension or a standard web-based LLM, the user is often frozen in place. As the researcher noted about their previous workflow, they would "kind of be in this place where I want to let Codex do its thing. I don't want to keep working." This pause, the waiting game, kills momentum.

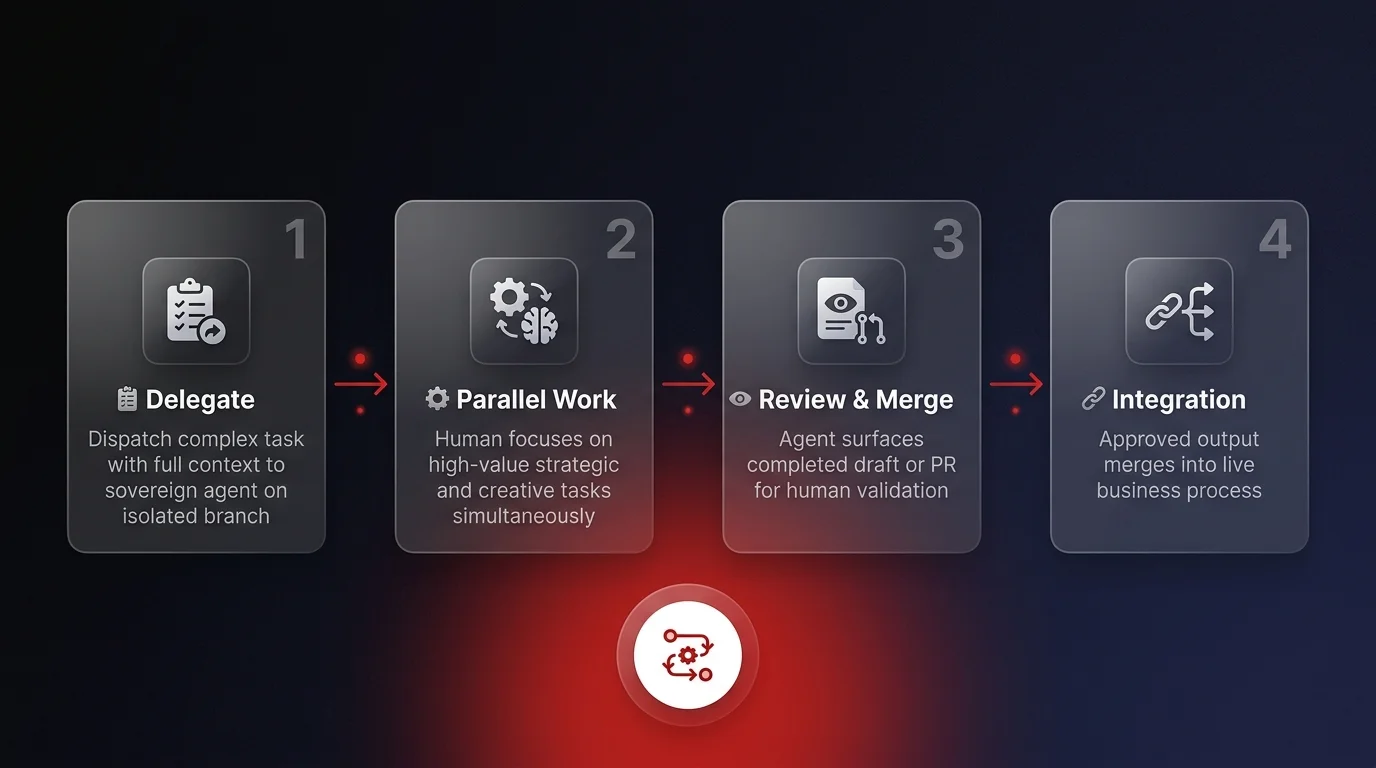

The breakthrough illustrated in recent workflow analyses is the "work tree." In this model, a user identifies a task (for example, updating the sidebar's pinned tasks to support drag-and-drop reordering) and kicks it off in a separate work tree based on the "master" branch. Crucially, the user does not watch the AI work. The task is "completely managed by the app," so the user can immediately return to their local tree and focus on a completely different objective, such as fixing a logic error in the "create branch" button.

For business operations, this is what distinguishes true agentic automation from mere assistance. If your Operations Manager has to watch the AI generate a report to ensure it is correct, they haven't saved time; they've simply switched from "writing" to "monitoring." True parallel execution lets the human focus on high-value strategy while the agent executes well-defined tasks in the background, surfacing only when the work is ready for review. This is the foundation of operations automation at scale.
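A minimal Python sketch of the dispatch-and-continue pattern, assuming a thread pool is an acceptable stand-in for an agent platform. `run_agent_task` and `do_foreground_work` are hypothetical placeholders that only sleep; they are not Codex APIs. The point is the shape: submitting the background task returns immediately, the human's own work proceeds concurrently, and the human blocks on the agent's result only at review time.

```python
from __future__ import annotations

import time
from concurrent.futures import Future, ThreadPoolExecutor


def run_agent_task(description: str) -> str:
    """Hypothetical stand-in for a background agent run (not a real API)."""
    time.sleep(0.5)  # simulate long-running agent work
    return f"Draft ready for review: {description}"


def do_foreground_work() -> str:
    """Hypothetical stand-in for the human's own task."""
    time.sleep(0.3)  # simulate manual debugging
    return "Fixed duplicate branch creation in the 'create branch' handler"


def main() -> tuple[str, str]:
    with ThreadPoolExecutor(max_workers=2) as pool:
        # Dispatch: submit() returns a Future immediately, so nobody waits here.
        background: Future[str] = pool.submit(
            run_agent_task, "drag-and-drop reordering for pinned sidebar tasks"
        )
        # Keep working in the foreground while the agent runs in a worker thread.
        mine = do_foreground_work()
        # Review point: block on the agent's result only once the human is ready.
        draft = background.result()
    return mine, draft


if __name__ == "__main__":
    mine, draft = main()
    print(mine)
    print(draft)
```

Because the two calls overlap, the run takes roughly as long as the slower task rather than the sum of both. In a real deployment the submitted call would hand off to an agent service, and "review" would mean reading a draft or PR rather than a string.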

Parallel AI workflows: shifting from individual lines to architecture

The most profound insight from these parallel workflows is the change they require in the operator's mindset. When you stop writing every line of code (or, in a business context, every line of a sales email or contract), your perspective rises to a higher level.

The researcher described this transition explicitly: "Instead of focusing on all the individual lines, you'll look at the overall architecture of the code." This is the essence of the shift from execution to orchestration. When the AI is handling the implementation of a drag-and-drop feature based on your instructions, your role shifts to verifying that the output aligns with the system's broader goals.

In the observed workflow, the user tasked the agent with a complex update. While the agent worked, the user noticed a bug in their manual work where a branch was being created twice. They were able to debug their local environment while the agent independently built a pull request (PR) for the background task. This ability to maintain high-level architectural oversight while agents handle the "grunt work" is the target state for modern operations teams.

The role of multimodal context

This architectural oversight is made possible by multimodal capabilities. In the analyzed workflow, the agent didn't just take text instructions; it ingested Figma designs to generate a "nice big PR" that matched the visual requirements.

For operations leaders, this supports the idea that agents can handle unstructured data, which distinguishes them from simple rule-based automations. An agent that can read a design file (or a PDF invoice, or a messy spreadsheet) and execute a complex task without constant hand-holding is a prerequisite for parallel workflows. It lets the leader provide the "blueprint" and trust the agent to build the structure.

The context switching challenge

While parallel AI workflows offer massive efficiency gains, they introduce a new cognitive load: context switching. The researcher admitted that "getting good at context switching in this form" is challenging. It is "pretty tough to completely switch what you're working on," and success depends on finding "good stopping points."

This is a critical warning for organizations deploying agentic systems. If you simply give employees access to ten parallel agents without a governance framework or a structured workflow, you risk cognitive burnout. The human brain is not designed to multitask effectively; it is designed to focus.

The "work tree" model addresses this by creating clear boundaries. The background task is isolated on a server or a separate branch; it doesn't touch the user's active workspace until it is finished. This separation of concerns is vital. In a business context, it means your AI agents should run in a governed, observable environment, separate from the employee's immediate desktop view, and deliver results only when a "stopping point" or review cycle is reached.
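The boundary described here can be sketched in Python. This is a minimal illustration under stated assumptions, not how any particular product implements it: `agent_worker`, `review_inbox`, and `at_stopping_point` are hypothetical names, and temporary directories stand in for work trees or remote servers (in a Git repository, a real isolated workspace could come from `git worktree add`). Each agent writes only into its own scratch directory and posts finished work to an inbox; the human drains that inbox without blocking, and only at a stopping point.

```python
from __future__ import annotations

import queue
import tempfile
import threading
import time
from dataclasses import dataclass
from pathlib import Path


@dataclass
class CompletedTask:
    name: str
    output: str


# Hypothetical review inbox: agents publish finished work here,
# never into the user's active workspace.
review_inbox: queue.Queue[CompletedTask] = queue.Queue()


def agent_worker(name: str, instructions: str) -> None:
    """Hypothetical background agent working in its own scratch directory."""
    with tempfile.TemporaryDirectory(prefix=f"{name}-") as scratch:
        draft = Path(scratch) / "draft.txt"
        draft.write_text(f"{name}: {instructions}")  # work stays isolated
        time.sleep(0.1)  # simulate longer-running work
        review_inbox.put(CompletedTask(name, draft.read_text()))


def at_stopping_point() -> list[CompletedTask]:
    """Drain whatever is finished without blocking on unfinished tasks."""
    ready: list[CompletedTask] = []
    while True:
        try:
            ready.append(review_inbox.get_nowait())
        except queue.Empty:
            return ready


def run_demo() -> list[CompletedTask]:
    tasks = {
        "invoice-triage": "extract totals from PDF invoices",
        "pipeline-report": "summarize this week's sales pipeline",
    }
    threads = [
        threading.Thread(target=agent_worker, args=(name, text))
        for name, text in tasks.items()
    ]
    for t in threads:
        t.start()
    # ... the human keeps working here and does not poll the inbox ...
    for t in threads:
        t.join()  # wait only so this demo's output is deterministic
    return at_stopping_point()


if __name__ == "__main__":
    for item in sorted(run_demo(), key=lambda r: r.name):
        print(item.output)
```

Because `at_stopping_point` uses `get_nowait`, calling it mid-task never stalls the human on unfinished work; anything still running simply appears at the next review.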