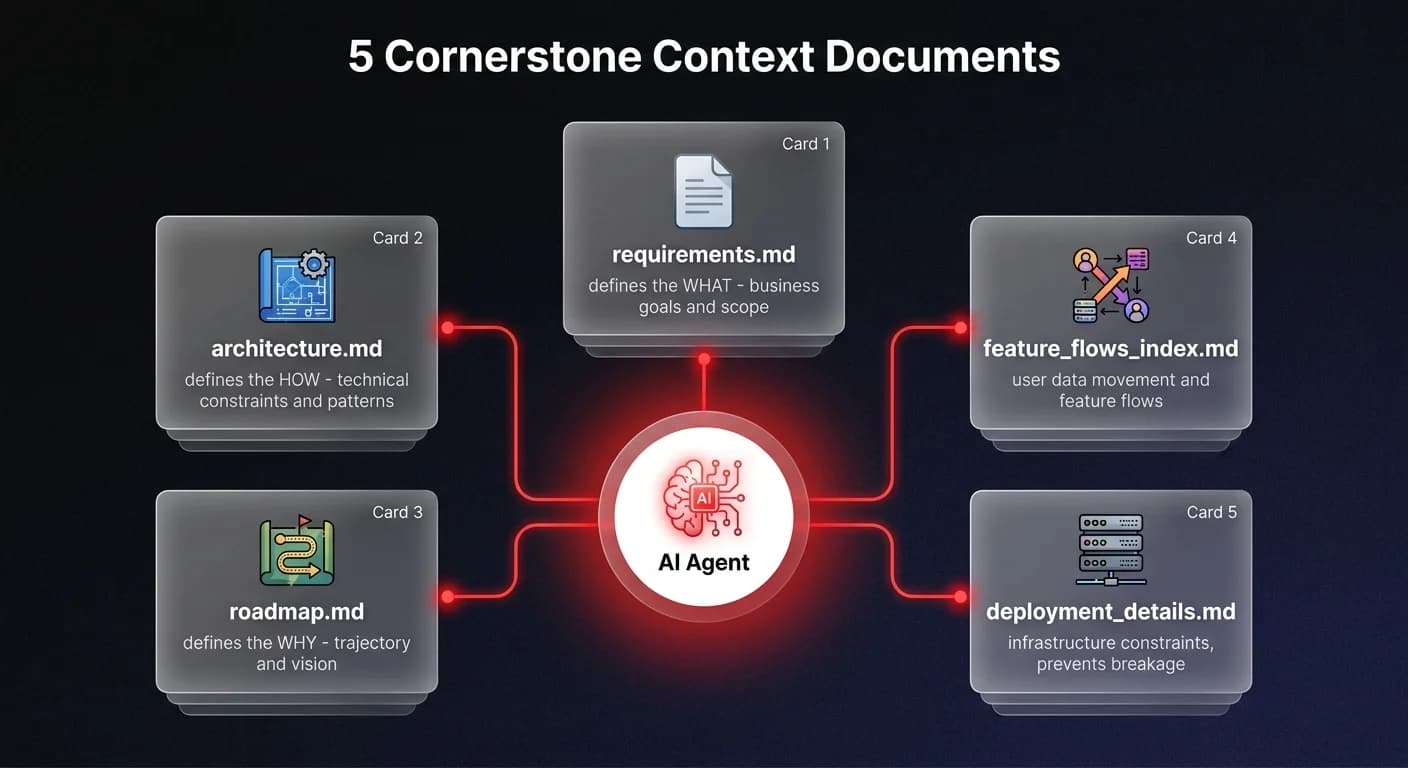

Structuring context for AI agents means providing comprehensive documentation — requirements, architecture decisions, feature flows, and deployment details — before the agent writes a single line of code. Without structured context, even the best AI coding assistants hallucinate solutions that break existing architecture. A well-designed documentation system — as few as five cornerstone files — transforms an AI from a code generator into a localized expert that respects your codebase boundaries and amplifies your output.

Context is king

Here's what I mean when I say context is king. An AI agent doesn't have the implicit knowledge that lives in your head. It doesn't know why you chose that specific architecture or what the business goals are unless you explicitly tell it. Without that context, it's just guessing. And in a complex project, guessing leads to hallucinations and broken builds.

To fix this, I orchestrate my projects around a set of cornerstone documents. I created a specific command for my agents, simply called 'read docs', that forces them to ingest the critical context before writing a single line of code.

It starts with the basics: 'requirements.md' defines the 'what' - what are we actually building? Then comes 'architecture.md', which defines the 'how' - the technical constraints and patterns we've agreed upon. In my experience, these two files alone prevent most of the drift (roughly 80%) in which the AI starts inventing libraries or patterns that don't exist in your stack.

But we go deeper. My agents also read 'roadmap.md' to understand the 'why' and the trajectory of the project. They look at 'feature_flows_index.md' to see how user data should move, and 'deployment_details.md' so they don't suggest infrastructure changes that break production. This isn't just documentation; it's a guardrail system. When the agent reads these, it gains high-signal awareness of the entire project scope. It stops being a code generator and starts acting like a localized expert on your specific repository.
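To make the idea concrete, here is a minimal sketch of what a 'read docs' step could look like. It is not the actual command from my setup: the function name `read_docs`, the `docs_dir` argument, and the `=== file ===` section labels are all assumptions chosen for illustration. The only things taken from above are the five filenames.

```python
from pathlib import Path

# The five cornerstone files named in this post, in reading order.
CORNERSTONE_DOCS = (
    "requirements.md",
    "architecture.md",
    "roadmap.md",
    "feature_flows_index.md",
    "deployment_details.md",
)


def read_docs(docs_dir: str, filenames: tuple[str, ...] = CORNERSTONE_DOCS) -> str:
    """Concatenate the cornerstone docs into one context block.

    Hypothetical helper: raises FileNotFoundError if any file is missing,
    so the agent never starts a task with partial context.
    """
    root = Path(docs_dir)
    missing = [name for name in filenames if not (root / name).is_file()]
    if missing:
        raise FileNotFoundError(f"missing cornerstone docs: {', '.join(missing)}")

    sections = []
    for name in filenames:
        text = (root / name).read_text(encoding="utf-8").strip()
        # Label each section so the agent can tell which file a rule came from.
        sections.append(f"=== {name} ===\n{text}")
    return "\n\n".join(sections)
```

Failing loudly on a missing file is a deliberate choice in this sketch: a guardrail that silently skips 'architecture.md' is no guardrail at all.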

From strategy to execution

I used this exact process for my 'Trinity' project, a complex system with many moving parts that would normally be a nightmare to maintain with AI assistance. Before implementing this strategy, the AI would frequently lose the plot. It would refactor code that shouldn't be touched or implement features that contradicted the core architecture.

Now, the workflow is radical in its simplicity. Before a task starts, the agent ingests the cornerstone files. It learns the testing protocols from 'testing_guide.md' and checks 'changelog.md' for recent changes. This multi-layered approach ensures the AI respects the existing codebase.
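One way this layering could be wired up is sketched below. Treat it as an illustration under assumptions, not the Trinity implementation: `build_task_prompt` is a hypothetical helper, the cornerstone context is passed in as an already-assembled string, and the sketch assumes 'changelog.md' lists the newest entries first, so only the top of the file is included.

```python
from pathlib import Path


def build_task_prompt(
    cornerstone_context: str,
    docs_dir: str,
    task: str,
    changelog_lines: int = 40,  # assumed cutoff; tune for your project
) -> str:
    """Assemble the prompt so that all context comes before the task."""
    root = Path(docs_dir)
    testing = (root / "testing_guide.md").read_text(encoding="utf-8").strip()

    # Assumption: newest changelog entries are at the top of the file.
    changelog = (root / "changelog.md").read_text(encoding="utf-8").splitlines()
    recent = "\n".join(changelog[:changelog_lines]).strip()

    return "\n\n".join(
        [
            cornerstone_context.strip(),
            f"=== testing_guide.md ===\n{testing}",
            f"=== changelog.md (most recent entries) ===\n{recent}",
            # The task goes last, after every layer of context.
            f"=== task ===\n{task.strip()}",
        ]
    )
```

Putting the task at the very end mirrors the workflow described above: the agent sees the boundaries first and the request second.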

If you want to replicate this, start by creating your own 'requirements.md' and 'architecture.md' today. Don't make them 50-page PDFs. Keep them concise, high-signal, and machine-readable. The goal isn't to write a novel; it's to provide a map.
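If you want a cheap way to keep yourself honest about 'concise', a small check like the following could flag docs that are turning into novels. This is only a sketch: `oversized_docs` is a hypothetical helper, and the 1,500-word default is an arbitrary budget I picked for the example, not a measured threshold.

```python
from pathlib import Path


def oversized_docs(docs_dir: str, max_words: int = 1500) -> dict[str, int]:
    """Map each Markdown file over the word budget to its word count.

    The default budget is an assumption for illustration only.
    """
    over_budget = {}
    for path in sorted(Path(docs_dir).glob("*.md")):
        # Whitespace-separated tokens are a rough but serviceable word count.
        words = len(path.read_text(encoding="utf-8").split())
        if words > max_words:
            over_budget[path.name] = words
    return over_budget
```

An empty result means every doc fits the budget; anything else is a list of files worth trimming before the agent reads them.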

By doing this, you're not just getting code faster. You are orchestrating a system where the AI understands the boundaries. You're enabling it to handle complexity that would normally require a senior engineer's oversight. The result? You can keep building and iterating on massive projects without the constant fear of the AI painting itself into a corner. That is how you truly own the AI development stack.

Building reliable AI systems

Building reliable AI systems requires more than just good prompting; it requires a fundamental shift in how we structure technical knowledge. At Ability.ai, we build these architectural principles directly into our agentic workflows. If you're ready to move beyond simple chatbots and orchestrate real business automation, let's talk about how to implement this in your organization.