AGI will not emerge from a single massive model. It will be an orchestrated ecosystem of specialized agents working together through shared memory and coordination layers. The bottleneck to general artificial intelligence is not raw capability; today's LLMs are powerful enough. The missing ingredient is architecture: a metacognitive coordination layer that binds specialized agents into a coherent, goal-directed system, much as the human brain is composed of distinct specialized regions rather than one general-purpose module.

My thesis is radical but simple. AGI isn't going to be one single, massive model. It will be an orchestrated ecosystem of specialized agents. We have enough raw intelligence right now. The bottleneck isn't capability anymore - it's architecture. The game has changed, and it's time to stop waiting and start building.

Let's break this down

The dominant narrative in Silicon Valley relies heavily on scaling laws - the idea that if we just make the models bigger and feed them more data, consciousness or general intelligence will magically emerge. I don't buy it.

Think about the human brain. It isn't one general-purpose module that does everything. It's a collection of highly specialized systems - vision, language, motor control, planning - all working in coordinated harmony. That is exactly how we need to think about Artificial General Intelligence.

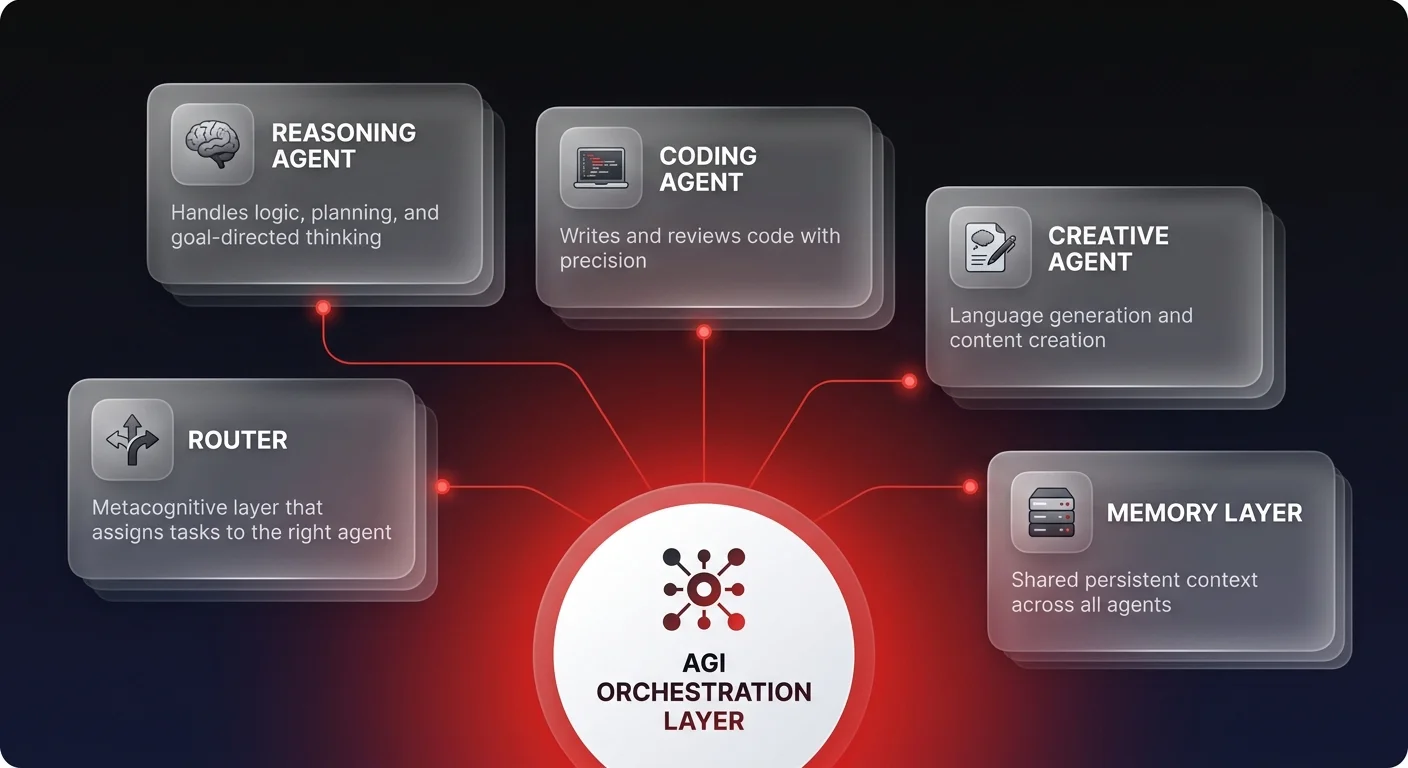

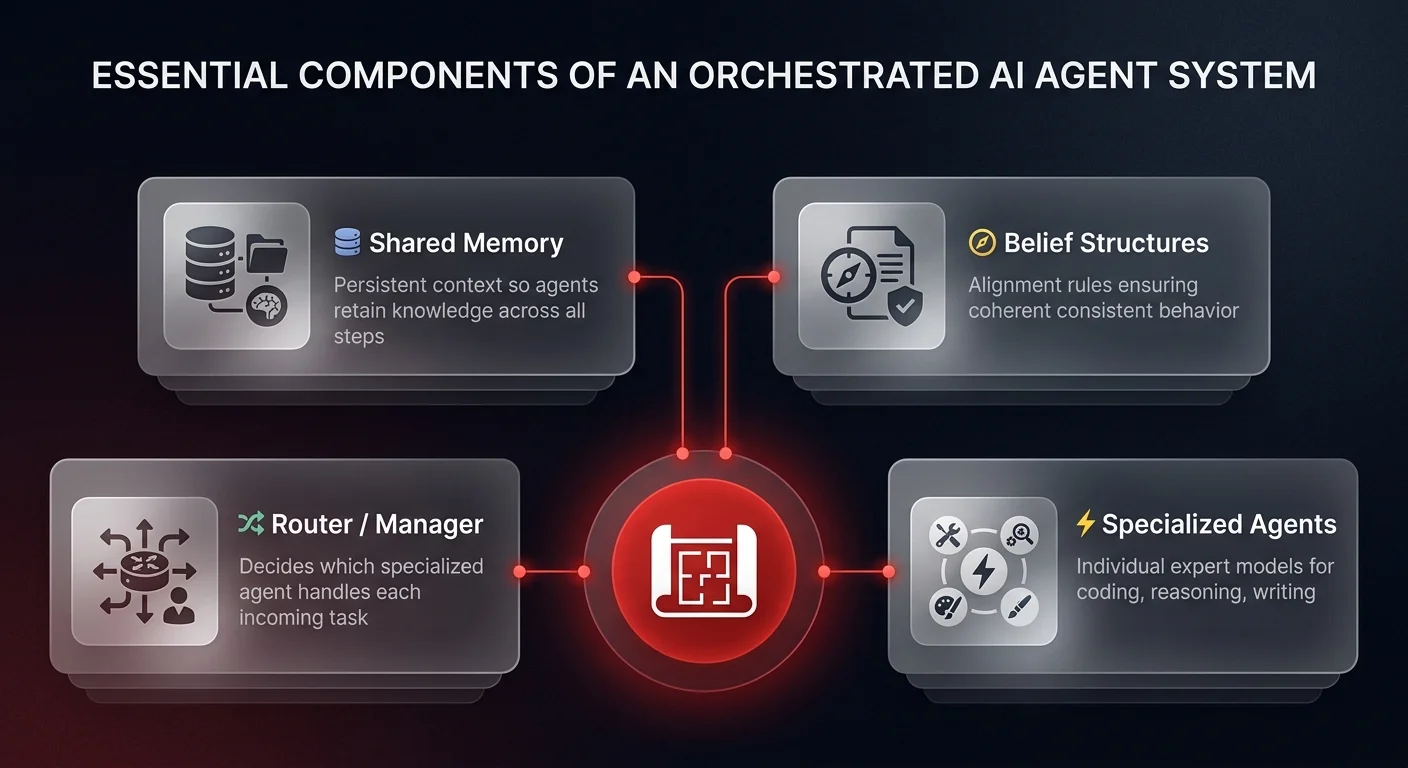

The 'general' in AGI comes from composition, not from a single general-purpose system. It comes from taking specialized agents - one expert at coding, another at reasoning, another at creative writing - and binding them together with a shared memory and belief system.

We are currently obsessed with the engines (the LLMs) when we should be focused on the car (the system). The underlying language models we have today are already good enough. We don't need GPT-7 to build incredible things. What we are missing is the metacognitive coordination layer that allows these specialized parts to function as a coherent whole.

So what does this mean for you right now?

It means you need to flip the script on how you build AI solutions.

Instead of trying to prompt-engineer one massive model to do twenty different things poorly, you need to orchestrate a team of AI agents. This is an architectural challenge, not a training challenge.

You need to build shared memory systems so your agents have context. You need to establish belief structures so they have alignment. You need to design the 'router' or manager that decides which agent handles which task. This is where the real value is being created today.
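To make those three pieces concrete, here is a minimal sketch of how they could fit together. Everything in it is an assumption for illustration: `SharedMemory`, `Router`, `coding_agent`, and `writing_agent` are hypothetical names, the agents are stand-in functions rather than real model calls, and keyword matching is only the simplest possible routing rule, not a recommendation.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class SharedMemory:
    """Context every agent can read: shared beliefs plus a log of past results."""
    beliefs: Dict[str, str] = field(default_factory=dict)
    history: List[str] = field(default_factory=list)


# An agent is any callable that takes (task, memory) and returns a result string.
Agent = Callable[[str, SharedMemory], str]


def coding_agent(task: str, memory: SharedMemory) -> str:
    # Hypothetical stand-in for a call to a code-specialized model.
    style = memory.beliefs.get("code_style", "default")
    return f"[coder/{style}] {task}"


def writing_agent(task: str, memory: SharedMemory) -> str:
    # Hypothetical stand-in for a call to a writing-specialized model.
    tone = memory.beliefs.get("tone", "neutral")
    return f"[writer/{tone}] {task}"


class Router:
    """Picks an agent by keyword match and falls back to a default agent."""

    def __init__(self, default: Agent, memory: SharedMemory) -> None:
        self.routes: Dict[str, Agent] = {}
        self.default = default
        self.memory = memory

    def register(self, keyword: str, agent: Agent) -> None:
        self.routes[keyword.lower()] = agent

    def handle(self, task: str) -> str:
        lowered = task.lower()
        # First registered keyword found in the task wins.
        agent = next(
            (a for k, a in self.routes.items() if k in lowered),
            self.default,
        )
        result = agent(task, self.memory)
        # Record the result so later agents can see earlier work.
        self.memory.history.append(result)
        return result


memory = SharedMemory(beliefs={"tone": "concise", "code_style": "pep8"})
router = Router(default=writing_agent, memory=memory)
router.register("bug", coding_agent)
router.register("function", coding_agent)

print(router.handle("Fix the bug in the invoice parser"))
print(router.handle("Draft a release announcement"))
```

In a real system the router would more likely be a model or a classifier than a keyword table, and the memory would live in a store that outlasts a single process. The shape stays the same either way: agents stay narrow, and the context and the routing decision live outside them.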

The reality is that a well-orchestrated system of smaller, specialized agents will outperform a single massive model that is prompted to do everything, and that principle sits behind every operations automation system we build. It's signal versus noise. It's precision versus generalization.

Stop waiting for the next model release to save you. The tools are already here. The builders who realize that intelligence is a composition problem, not a scale problem, are the ones who will own the future. The capability bottleneck is gone. The only limit now is your ability to architect the system.

This shift from monolithic models to orchestrated ecosystems is exactly what we focus on at Ability.ai. We don't just wrap APIs; we design the cognitive architectures that make AI agents actually work for business. If you're ready to stop waiting and start orchestrating real intelligence, let's talk.