AI software lifecycle management is the practice of using AI agents to own the entire development process — not just code generation, but testing, documentation, changelogs, and quality assurance. Most developers use AI like a smarter Stack Overflow, generating snippets and moving on. But this approach captures only 10% of the available value. By treating AI as an active partner across the full software lifecycle, teams eliminate documentation rot, reduce manual overhead, and let specialized sub-agents handle the administrative drudgery that kills engineering velocity.

Orchestrating the lifecycle

Here's what I mean when I talk about orchestrating the lifecycle.

I recently ran a live demo where an AI agent fixed a dark mode CSS issue. For most people, that's the end of the demo. The code works, move on. But here is the thing: the code is just one part of the equation. What happened next is where the real value lies. Because the system was instructed to take ownership, it immediately added the update to the changelog. It didn't stop there. It identified the affected feature flows and updated the documentation to reflect the new UI state.

This is the fundamental difference between a passive tool and an active, agentic partner. When you limit AI to simple code generation, you are ignoring the biggest headache in software development: consistency. We all know the pain of documentation rot. Code changes, but the docs stay the same, and suddenly your knowledge base is a liability. By integrating AI into the full lifecycle, a pattern we apply in our software development automation practice, you ensure that every change propagates through the entire system.
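To make that propagation concrete, here is a minimal sketch in Python. It is not our actual implementation: the `Change` and `Project` types, the step functions, and the `styles/theme.css` path are all hypothetical, and the "agents" are plain functions standing in for model calls. The point is only the shape: a change is not finished until every follow-up step has run.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Change:
    """A single code change moving through the lifecycle (hypothetical shape)."""
    summary: str
    files: list[str]
    completed_steps: list[str] = field(default_factory=list)


@dataclass
class Project:
    """In-memory stand-in for a repository's changelog and docs."""
    changelog: list[str] = field(default_factory=list)
    docs: dict[str, str] = field(default_factory=dict)


def update_changelog(change: Change, project: Project) -> None:
    # Record the change where release notes are built from.
    project.changelog.append(f"- {change.summary}")


def update_docs(change: Change, project: Project) -> None:
    # A real agent would work out which feature flows are affected;
    # in this sketch every touched file simply gets a note.
    for path in change.files:
        project.docs[path] = f"Updated for: {change.summary}"


# Ordered follow-up steps that run after the code fix itself.
LIFECYCLE_STEPS: list[tuple[str, Callable[[Change, Project], None]]] = [
    ("changelog", update_changelog),
    ("docs", update_docs),
]


def propagate(change: Change, project: Project) -> Change:
    """Run every follow-up step and record which ones completed."""
    for name, step in LIFECYCLE_STEPS:
        step(change, project)
        change.completed_steps.append(name)
    return change


project = Project()
change = propagate(Change("Fix dark mode CSS issue", ["styles/theme.css"]), project)
print(change.completed_steps)
print(project.changelog)
```

Adding a test or QA step would mean appending one more entry to `LIFECYCLE_STEPS`, which is the design choice worth noticing: the lifecycle is data the orchestrator walks, not logic buried in a prompt.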

The AI understands the context of the project, not just the syntax of the function. It is not replacing the engineer; it is amplifying them by handling the administrative drudgery that usually kills velocity. You need to flip the script. Stop asking 'how can AI write this function?' and start asking 'how can AI maintain this product?'

Specialized sub-agents

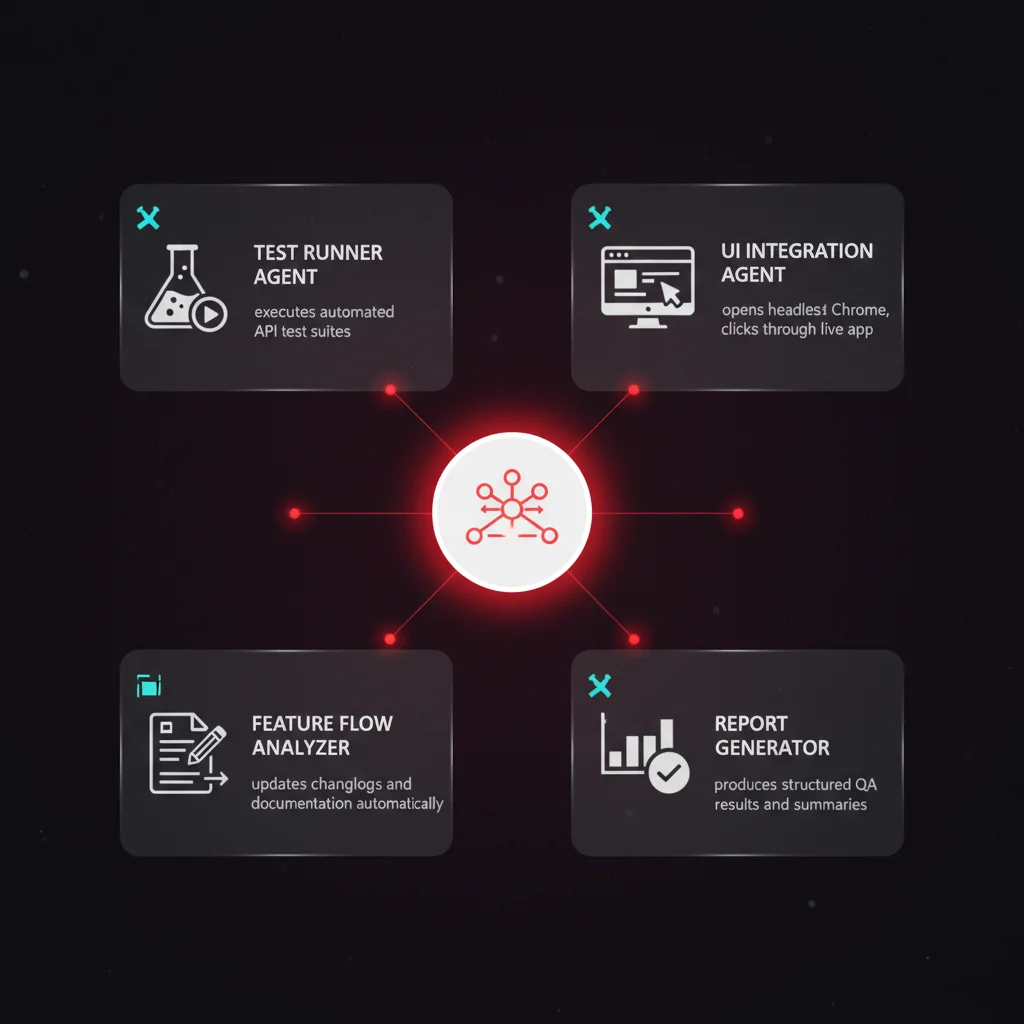

So, how do you execute this level of automation? The secret is not building one massive, all-knowing brain. It is about orchestrating specialized sub-agents.

In our stack, we do not rely on a single model to do everything. We have a specific 'test runner subagent' that handles API tests. There is a separate agent for UI integration tests, which opens Chrome on its own and clicks through the local application to verify the user experience. We are even updating feature flows with a dedicated 'feature flow analyzer' agent.

This modular approach is critical. By splitting responsibilities, you reduce the noise for each model. The test agent does not need to know how to write the documentation; it just needs to know how to break the app. The documentation agent does not need to run the browser; it just needs to interpret the code changes.
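Here is a rough sketch of what "reducing the noise" can look like in code. Everything in it is an assumption made for illustration: the `ChangeContext` fields, the agent names, the localhost URL, and the `stub` helper that stands in for a real model or browser call. Each sub-agent declares the context it needs, and the orchestrator hands it only that slice.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ChangeContext:
    """Everything the orchestrator knows about a change (hypothetical fields)."""
    diff: str
    api_spec: str
    app_url: str


@dataclass(frozen=True)
class SubAgent:
    name: str
    needs: tuple[str, ...]  # the only context fields this agent gets to see
    run: Callable[[dict[str, str]], str]


def stub(task: str) -> Callable[[dict[str, str]], str]:
    """Build a placeholder agent body; a real one would call a model or a tool."""
    def run(scoped: dict[str, str]) -> str:
        return f"{task} using {sorted(scoped)}"
    return run


AGENTS = [
    SubAgent("test_runner", ("diff", "api_spec"), stub("ran API tests")),
    SubAgent("ui_integration", ("app_url",), stub("clicked through the app")),
    SubAgent("docs", ("diff",), stub("updated documentation")),
]


def orchestrate(context: ChangeContext, agents: list[SubAgent]) -> dict[str, str]:
    """Give each agent only the fields it declared, then collect its report."""
    reports: dict[str, str] = {}
    for agent in agents:
        scoped = {key: getattr(context, key) for key in agent.needs}
        reports[agent.name] = agent.run(scoped)
    return reports


context = ChangeContext(
    diff="fix dark mode CSS",
    api_spec="(placeholder spec)",
    app_url="http://localhost:3000",
)
reports = orchestrate(context, AGENTS)
for name, report in reports.items():
    print(f"{name}: {report}")
```

In this sketch the documentation agent never sees the app URL, and the UI agent never sees the diff. That scoping, rather than any particular model, is what the modular approach buys you.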

In our experience, specialized agents perform better than generalists. Instead of creating a bottleneck with one model, you create a workflow where multiple agents collaborate. This is how automation starts to compound. You move from a developer typing code to an architect directing a swarm of autonomous agents, and the winners will be those who build teams of agents, not just prompts.

Building self-sustaining systems

We are moving toward a world where you don't just write software; you manage the agents that build it. At Ability.ai, we help businesses transition from simple automation to full-lifecycle agentic orchestration. If you're ready to stop maintaining legacy code and start building self-sustaining systems, let's talk.