AI governance is a CEO responsibility because the risks of ungoverned AI (data leaks, regulatory fines, reputational damage from Shadow AI) land on the CEO's desk, not the IT department's. Banning AI is no answer either; it only drives usage underground. The solution is proactive governance that transforms AI from a hidden liability into a transparent, auditable strategic asset.

The generative AI boom isn't just a technological shift; it's a leadership crisis in the making for every CEO. While employees rapidly adopt AI tools, often without oversight in a phenomenon known as 'Shadow AI', a dangerous "governance gap" is widening. This gap is the unmanaged space between rapid AI adoption and the C-suite's responsibility to ensure safe, ethical, and strategic use, and it is leading to a widespread erosion of trust. As the stark reality suggests, "AI doesn't fail because it moves too fast. It fails when it scales without governance." For today's CEO, the central question is no longer if you will use AI, but how you will lead its integration. This makes AI decision transparency and governance the ultimate test of modern leadership.

The leadership mandate

For too long, the C-suite has mistakenly viewed AI governance as a technical problem, delegating it to IT. This approach is no longer tenable because the risks of ungoverned AI - from massive data leaks and regulatory fines to catastrophic reputational damage - are enterprise-level threats that land squarely on the CEO's desk. Attempting to ban AI is an equally flawed strategy. Prohibition is not governance; it merely drives usage underground, creating an unmanageable 'Shadow AI' ecosystem where employees use unsanctioned, often insecure, tools with company data. A ban not only heightens security risks but also places the organization at a significant competitive disadvantage. The real challenge is strategic: a vacuum of leadership around AI, not the technology itself. The conversation must shift from reactive damage control to proactive, strategic enablement. This is why the sharpest minds in the industry now argue that "AI governance isn't an IT checklist - it's a leadership mandate." The CEO's role is to set the vision and implement frameworks that transform AI from a hidden liability into a transparent, strategic asset.

From principles to practice

Many organizations already possess AI ethics principles - lofty statements about fairness, accountability, and transparency that often live only in a slide deck. The gap between these principles and what's actually happening in production is where risk truly flourishes. The urgent need is to move from governance-on-paper to evidence-based governance that is embedded directly into your technical and operational workflows. Practitioners on the front lines grapple daily with this "production gap," complaining that AI agents are often brittle, insecure, and incredibly difficult to govern. Translating a high-level policy like "ensure customer data privacy" into machine-enforceable rules that an AI agent cannot circumvent is a massive technical and operational bottleneck.
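To make that translation concrete, here is a minimal Python sketch of what a machine-enforceable version of "ensure customer data privacy" might look like: a check that runs on every prompt before it reaches a model. It is an illustration under stated assumptions, not a production control. The function name `enforce_privacy_policy`, the `GuardrailResult` type, and the two regular expressions are all hypothetical, and simple patterns like these would miss many kinds of customer data.

```python
import re
from dataclasses import dataclass

# Hypothetical detectors standing in for "customer data".
# A real deployment would need a vetted, much broader detector.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
CARD_NUMBER = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")  # 13 to 16 digits

RULES = (("email", EMAIL), ("card_number", CARD_NUMBER))


@dataclass
class GuardrailResult:
    allowed: bool          # may the (redacted) prompt be sent onward?
    text: str              # prompt with detected data redacted
    violations: list[str]  # names of the rules that fired, for the audit log


def enforce_privacy_policy(prompt: str, block_on_violation: bool = False) -> GuardrailResult:
    """Redact detected customer data and optionally block the request."""
    violations = []
    redacted = prompt
    for name, pattern in RULES:
        if pattern.search(redacted):
            violations.append(name)
            redacted = pattern.sub(f"[REDACTED_{name.upper()}]", redacted)
    allowed = not (violations and block_on_violation)
    return GuardrailResult(allowed, redacted, violations)


if __name__ == "__main__":
    result = enforce_privacy_policy(
        "Refund jane.doe@example.com on card 4111 1111 1111 1111 today"
    )
    print(result.text)
    print(result.violations)
```

The point of the sketch is the shape rather than the patterns: the policy becomes a function that every request must pass through, and the `violations` list gives you evidence of what was caught instead of a statement of intent.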

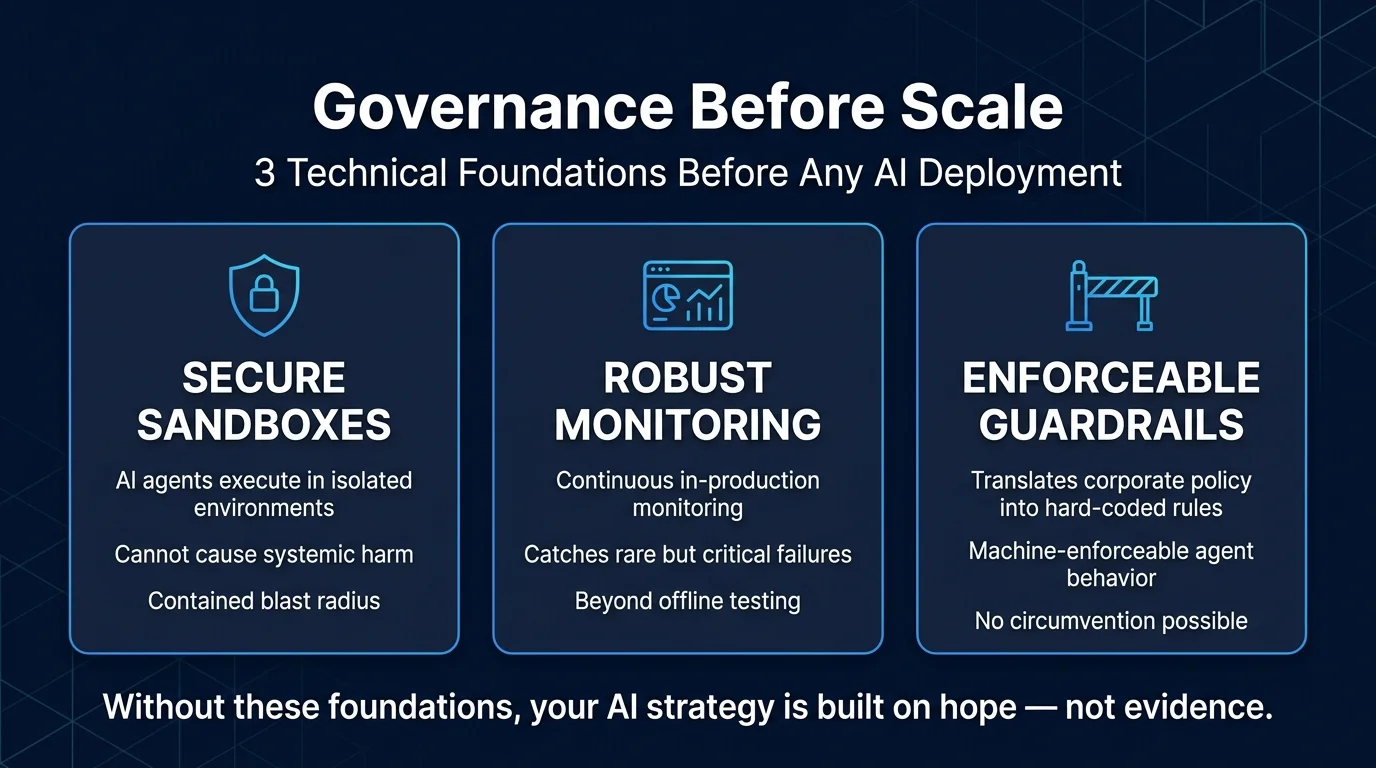

Governance before scale

This is why the mantra must be: "Governance must come before scale." Before you roll out that new customer-facing chatbot or internal data analysis tool, you must have the systems in place to prove it is safe, unbiased, and reliable. That means three things. Secure Sandboxes ensure that AI agents that execute code do so in isolated environments where they cannot cause systemic harm. Robust Monitoring moves beyond offline testing to continuous, in-production monitoring that catches rare but critical failures. Enforceable Guardrails are technical systems that translate high-level corporate policies into hard-coded rules for AI behavior. Without these foundational pillars, your AI strategy is built on hope, not evidence. And hope is not a risk management strategy. See how Ability.ai implements governance-first AI deployments for mid-market companies.