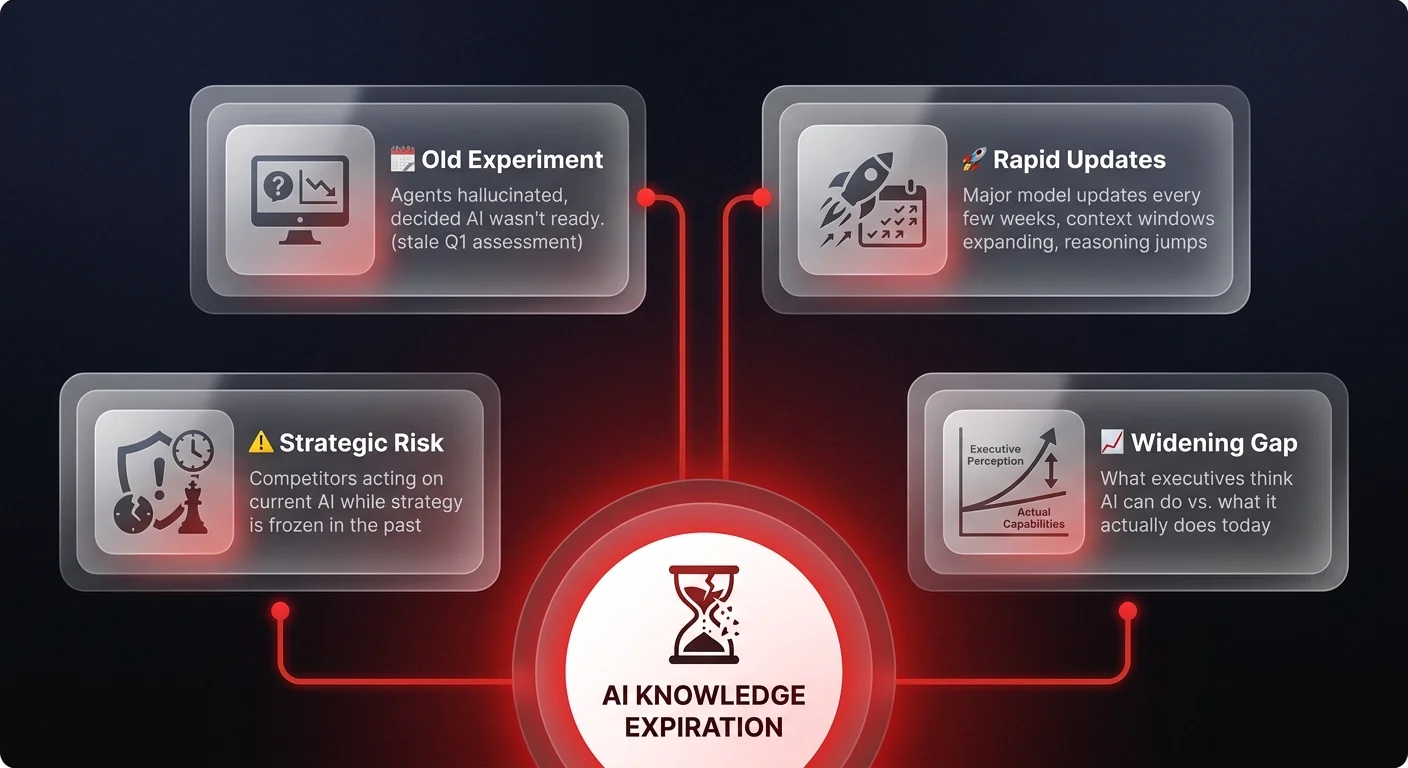

AI knowledge expiration risk is the strategic danger of making business decisions based on an outdated understanding of AI capabilities. Major model updates now arrive every few weeks: context windows expand, reasoning improves, and agentic frameworks mature rapidly. An AI evaluation from six months ago may have little bearing on what the technology can do today, and executives who rely on old experiments risk being lapped by competitors acting on current intelligence.

I talk to executives every week who say, "We tried agents, but they hallucinated too much." And I have to tell them that this was true in January. Today, the game has changed completely. Making strategic decisions based on half-year-old data isn't caution; it's negligence. You are likely dismissing tools that could radically amplify your business right now.

Knowledge expiration

Here's what I mean when I say your knowledge is expiring faster than milk.

I constantly encounter a specific pattern in my calls. Smart, successful leaders come to the table with a firm stance: "We tried building an agent six months ago. It couldn't handle complex reasoning, so we paused."

They aren't wrong about what happened then. They are wrong about what is possible now.

If you aren't working with agents on a daily basis (looking at the code, testing new models, integrating the latest frameworks), you are almost certainly not up to date. The velocity of change in the AI stack is unparalleled. What was impossible or hallucination-prone in Q1 can be standard, reliable functionality by Q3.

This creates a massive strategic risk. Executives are rejecting viable, high-impact automation because their mental model of the technology is frozen in time. They are orchestrating their 2025 strategy based on the limitations of early 2024.

Think about it. We are seeing major model updates every few weeks. Context windows are expanding, reasoning capabilities are jumping, and agentic frameworks are maturing fast. If your "experiment" from six months ago is your benchmark, you are effectively betting against the fastest-moving technology in history based on old intelligence.

The reality is that you cannot trust your own past experience here. Unless that experience is from last Tuesday, it's likely already stale. The gap between "what I think AI can do" and "what AI can actually do" is widening every day for those who aren't in the trenches.

Managing the velocity

So the question is: how do you actually manage this? You have a day job. You're focused on profitability, growth, and managing your teams. You cannot reasonably be expected to spend four hours a day reading arXiv papers or testing the latest agent frameworks.

But you also can't afford to be blind.

First, stop treating AI adoption as a one-off experiment. It is not a project you pass or fail and then move on from. It is a continuous stream. Instead of asking "Does AI work?", you need to ask "Does it work yet?" and check back frequently.
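One way to turn "Does it work yet?" into a routine is to keep a fixed set of tasks and rerun it whenever a new model ships. The sketch below is a minimal illustration under stated assumptions: `Task`, `EvalRecord`, `run_suite`, and `works_yet` are hypothetical names, the dates are made up, and the two model functions are stand-ins rather than real model APIs.

```python
from dataclasses import dataclass
from datetime import date
from typing import Callable, List


@dataclass
class Task:
    prompt: str
    check: Callable[[str], bool]  # True when the answer is acceptable


@dataclass
class EvalRecord:
    run_date: date
    model_name: str
    pass_rate: float


def run_suite(model_name: str, model: Callable[[str], str],
              tasks: List[Task], run_date: date) -> EvalRecord:
    """Run every task against one model and record the pass rate."""
    if not tasks:
        raise ValueError("the task suite must not be empty")
    passed = sum(1 for task in tasks if task.check(model(task.prompt)))
    return EvalRecord(run_date, model_name, passed / len(tasks))


def works_yet(history: List[EvalRecord], threshold: float) -> bool:
    """Judge only the most recent run; older results are treated as stale."""
    if not history:
        return False
    latest = max(history, key=lambda record: record.run_date)
    return latest.pass_rate >= threshold


# Stand-in "models" (assumptions for illustration, not real model calls).
def older_model(prompt: str) -> str:
    return "I am not sure."


def newer_model(prompt: str) -> str:
    answers = {"What is 2 + 2?": "4", "Capital of France?": "Paris"}
    return answers.get(prompt, "I am not sure.")


tasks = [
    Task("What is 2 + 2?", lambda answer: answer.strip() == "4"),
    Task("Capital of France?", lambda answer: "Paris" in answer),
]

# First evaluation: the older stand-in fails the suite.
history = [run_suite("older-model", older_model, tasks, date(2025, 1, 15))]
verdict_then = works_yet(history, threshold=0.9)

# Same suite, rerun later against a newer stand-in.
history.append(run_suite("newer-model", newer_model, tasks, date(2025, 7, 15)))
verdict_now = works_yet(history, threshold=0.9)
```

The design choice here is that the verdict always comes from the latest dated run, so an old failure cannot silently remain the benchmark.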

Second, recognize that you cannot own this knowledge entirely in-house unless you have a dedicated R&D team doing nothing else. You need high-signal partners who live and breathe this stuff. You need people who can tell you, "Yes, that was impossible in March, but we solved it in July using this new approach."

Third, you need to radically shift your mindset regarding implementation. The goal isn't to build a perfect static system. It's to build an architecture that can absorb new capabilities as they arrive. We are moving from a world of rigid software to fluid, agentic workflows that update as the underlying models improve.
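Here is a minimal sketch of what "absorbing new capabilities" could look like in code, assuming the workflow talks to models only through one narrow interface. `ModelRegistry`, `triage_ticket`, and both model functions are hypothetical names for illustration; real model calls would replace the stand-ins.

```python
from typing import Callable, Dict, Optional

ModelFn = Callable[[str], str]


class ModelRegistry:
    """Holds interchangeable model backends behind one call signature."""

    def __init__(self) -> None:
        self._models: Dict[str, ModelFn] = {}
        self._active: Optional[str] = None

    def register(self, name: str, fn: ModelFn, activate: bool = False) -> None:
        self._models[name] = fn
        # The first registered backend becomes active by default.
        if activate or self._active is None:
            self._active = name

    def complete(self, prompt: str) -> str:
        if self._active is None:
            raise RuntimeError("no model registered")
        return self._models[self._active](prompt)


def triage_ticket(registry: ModelRegistry, ticket: str) -> str:
    """Workflow step: depends on the registry, never on a specific model."""
    reply = registry.complete(f"Classify this support ticket: {ticket}")
    return reply.strip().lower()


# Stand-in backends (assumptions for illustration only).
def model_v1(prompt: str) -> str:
    return "Unknown"


def model_v2(prompt: str) -> str:
    return "Billing" if "invoice" in prompt.lower() else "General"


registry = ModelRegistry()
registry.register("v1", model_v1)
before = triage_ticket(registry, "My invoice is wrong")

# A newer backend arrives; the workflow code above is not edited.
registry.register("v2", model_v2, activate=True)
after = triage_ticket(registry, "My invoice is wrong")
```

The workflow function is untouched between the two calls; only the registered backend changes, which is the property the paragraph above is asking for.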

If you continue to make decisions based on the ghost of AI past, you will get lapped by competitors who are leveraging the AI of today. The status quo is comfortable, but in this market, it's a death sentence.

Don't let a failed experiment from six months ago block the transformation your business needs today.

Implementing systems that work

The technology isn't waiting for you to catch up. At Ability.ai, we don't just watch the market; we build the agents that define it. If you're ready to stop relying on outdated assumptions and start implementing AI operations automation that actually works right now, let's talk. We help you cut through the noise and orchestrate real business transformation.