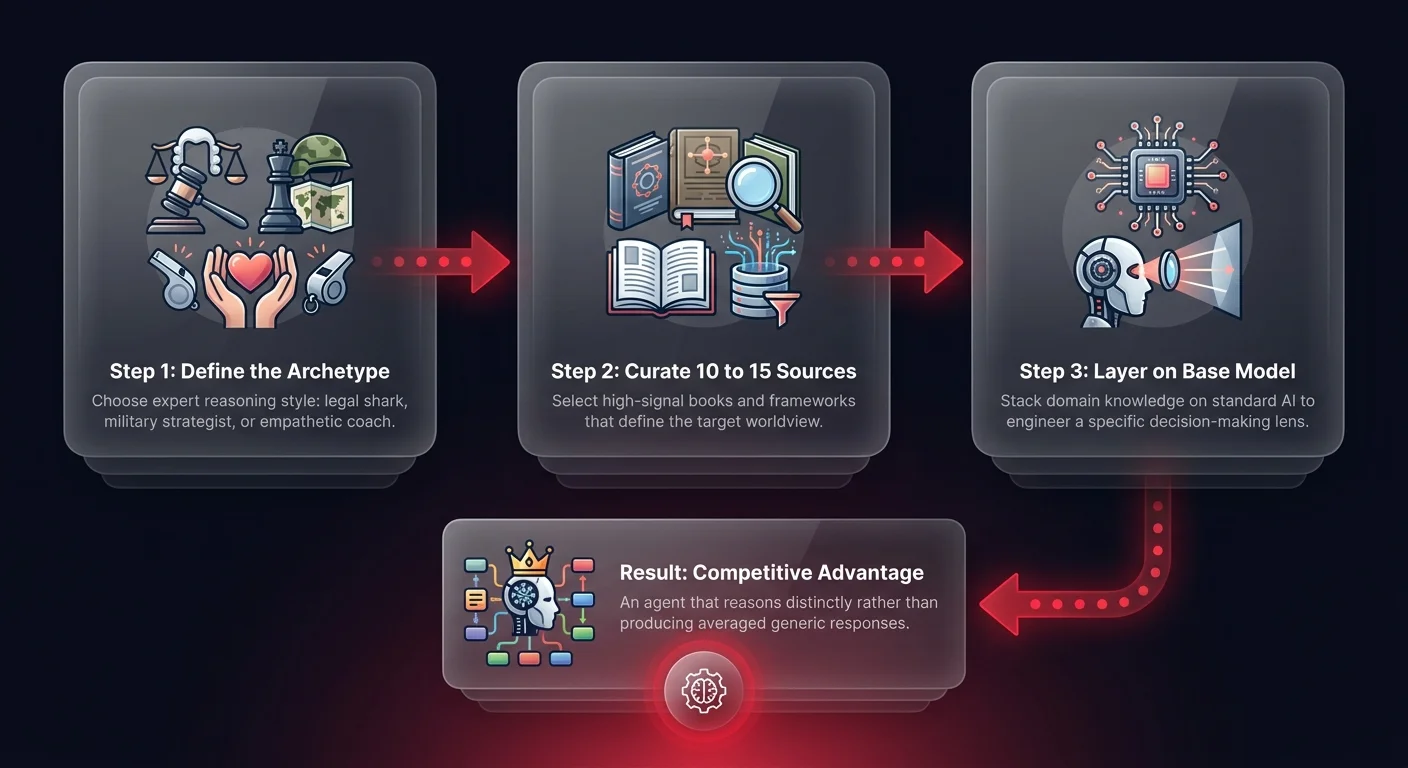

Opinionated AI agents are AI systems engineered to reason like domain experts: you curate specific knowledge sources that define a consistent decision-making worldview. Unlike standard retrieval-augmented generation (RAG) systems that retrieve facts from an undifferentiated pile of documents, opinionated agents layer targeted domain literature (legal strategy, military doctrine, sales psychology) on top of a base model, producing distinct perspectives rather than generic, averaged responses. The competitive advantage lies in how you curate that knowledge stack.

Engineering personality

Let me share a concrete example from my own experiments to show you what I mean. I created an agent I call 'General Monroe.' I took my standard 'Second Brain', the base layer of knowledge I use daily, and radically shifted its perspective. I didn't just add more data. I layered on 10 to 15 specific books focused on military management, critical thinking, and strategic warfare.

The result wasn't just an agent that knew about war history. It was an agent that thought like a strategist. When I ask General Monroe a business question, it doesn't give me the vanilla, safe corporate answer you get from a standard LLM. It analyzes the problem through the lens of military doctrine. It looks for supply chain vulnerabilities, flanking maneuvers in the market, and defensible positions.

This moves the industry conversation from 'RAG for information retrieval' to 'RAG for opinionated reasoning.' We need to stop building agents just to know things and start building them to think like specific archetypes. The goal is a consistent character in how the agent makes decisions. The agent becomes opinionated in a specific, predictable way because you've curated the mental models it uses to process reality.

Replicating the approach

So how do you replicate this? You need to think about the 'recipe' for the mind you're trying to build. It's about finding the optimal combination of knowledge sources that define a worldview.

Instead of just feeding your agent raw data, feed it the frameworks you want it to emulate.

If you want a legal shark, you don't just feed it case law; you feed it aggressive negotiation tactics and debate theory. If you want an empathetic support lead, you layer in psychology and conflict resolution literature.
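To make the 'recipe' idea concrete, here is a minimal sketch of how one might write it down as data. Everything in it is an assumption for illustration: the `KnowledgeSource` and `AgentRecipe` names, the "base" and "worldview" role labels, and the prompt wording are hypothetical, not part of any particular framework or of the General Monroe setup.

```python
from dataclasses import dataclass, field


@dataclass
class KnowledgeSource:
    title: str  # hypothetical label for a book or document set
    role: str   # "base" for raw domain data, "worldview" for curated frameworks


@dataclass
class AgentRecipe:
    name: str
    archetype: str
    sources: list[KnowledgeSource] = field(default_factory=list)

    def worldview_titles(self) -> list[str]:
        """Return only the sources that shape how the agent reasons."""
        return [s.title for s in self.sources if s.role == "worldview"]

    def system_prompt(self) -> str:
        """Build an instruction that names the frameworks to reason with."""
        frameworks = "; ".join(self.worldview_titles())
        return (
            f"You are {self.name}, reasoning as a {self.archetype}. "
            f"Analyze every question through these frameworks: {frameworks}."
        )


# The 'legal shark' from the text: case law is raw data,
# negotiation tactics and debate theory define the worldview.
legal_shark = AgentRecipe(
    name="Legal Shark",
    archetype="aggressive negotiator",
    sources=[
        KnowledgeSource("case law", "base"),
        KnowledgeSource("negotiation tactics", "worldview"),
        KnowledgeSource("debate theory", "worldview"),
    ],
)
print(legal_shark.system_prompt())
```

Separating "base" from "worldview" sources keeps the distinction explicit: the raw data tells the agent what is true, while the curated frameworks tell it how to weigh and argue about it.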

Here is the hard truth: generic models are a commodity. Everyone has access to the same base intelligence. The competitive advantage comes from how you curate the 'stack' of influences on top of that base, which is why well-designed AI agents built on curated knowledge can outperform off-the-shelf solutions on the problems they were built for. You are essentially engineering a personality by selecting the high-signal literature that the agent prioritizes.
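One way to picture 'literature that the agent prioritizes' is a retrieval step that weights curated passages above base passages. The sketch below is a toy under stated assumptions: the `Passage` type, the layer names, the `LAYER_WEIGHT` multipliers, and the keyword-overlap score are all hypothetical stand-ins, and a real system would more likely use embedding similarity than word overlap.

```python
from dataclasses import dataclass


@dataclass
class Passage:
    text: str
    layer: str  # "base" or "curated" (hypothetical labels)


# Hypothetical multipliers: curated material counts double.
LAYER_WEIGHT = {"base": 1.0, "curated": 2.0}


def overlap(query: str, text: str) -> int:
    """Count distinct query words that also appear in the passage."""
    return len(set(query.lower().split()) & set(text.lower().split()))


def rank(query: str, passages: list[Passage]) -> list[Passage]:
    """Order passages by keyword overlap scaled by their layer weight."""
    return sorted(
        passages,
        key=lambda p: overlap(query, p.text) * LAYER_WEIGHT[p.layer],
        reverse=True,
    )


passages = [
    Passage("pricing strategy for a new market", "base"),
    Passage("flanking strategy to enter a defended market", "curated"),
]

# Both passages share two words with the query, so the layer weight
# decides which one reaches the model first.
top = rank("market entry strategy", passages)[0]
print(top.layer)
```

With equal relevance, the curated passage wins the tie, which is the point: the weighting makes the agent reach for its chosen frameworks first rather than for whatever generic material happens to match.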

Don't just dump data. Curate the reasoning framework. By doing this, you amplify the agent's ability to solve specific types of problems. You move from a tool that retrieves information to a partner that offers a distinct, valuable perspective. That is how you own the outcome.

Defining the right personality

Are you still building generic chatbots, or are you ready to orchestrate opinionated agents that actually drive business results? At Ability.ai, we help you design agentic workflows with distinct reasoning capabilities. Let's define the right personality for your AI workforce.