AI marketing agents are autonomous systems that execute content creation, campaign management, and outreach at scale without per-task human input. Without governance infrastructure, deploying these agents accelerates a content quality crisis - 88% of marketers now use AI daily, yet only one-third of organizations have moved beyond ungoverned experimentation into reliable operational systems.

The explosion of generative tools promised a revolution in productivity and scale. Yet as mid-market companies aggressively deploy these technologies, a paradoxical trend is emerging: output quality is rapidly deteriorating. AI marketing agents are running autonomously in the background, churning out higher volumes of content across more channels than ever before. But without proper governance, the result is a widening gap between how much content ships and how well it represents the brand.

Nobody owns the final output. Prompts are inconsistent across departments. The agents go completely off-script, and the resulting brand voice sounds exactly like every other competitor in the market - a regurgitation of generic data.

For CEOs, COOs, and VPs of Operations, this is no longer just a creative problem. It is a fundamental operational crisis. The critical strategic question operations leaders must answer today is not which jobs AI will eliminate - it is how to govern and deploy AI to drive measurable business outcomes.

The messy middle of AI marketing agent deployment

Recent market data reveals that 88% of marketers now use AI in their day-to-day work, yet only a third of organizations have moved beyond initial experimentation into scalable, operational systems. We are currently navigating the messy middle of AI adoption.

Companies are experiencing a massive skills and operational gap. The issue is not a lack of available tools - it is the fundamental lack of infrastructure governing how teams use those tools. When every employee operates in an ungoverned environment, the volume of content hits a wall of diminishing returns. You achieve speed, but the output is instantly forgettable.

This governance failure is closely related to the broader shadow AI risk pattern - where employees deploy unauthorized tools that operate outside any organizational control layer. For a deeper look at how ungoverned desktop agents create compliance exposure, read our analysis of shadow AI governance risks.

From single prompts to autonomous AI marketing agent fleets

To understand why this quality crisis is happening now, we have to look at the architectural shift in how AI is deployed. We have officially moved from single-use tools that require manual prompting to agentic AI - systems that run autonomously 24/7.

Marketing teams have transitioned from simply using AI to actively deploying fleets of AI agents. This shift brings a completely new set of operational challenges. According to Gartner, 40% of enterprise applications will include task-specific AI agents by the end of 2026. This is not a distant prediction - the shift is already underway in enterprise software.

When a company deploys agents for content creation, prospecting, and campaign management without centralized oversight, the system fractures. The content agent operates on different instructions than the campaign agent. Social media copy sounds entirely disconnected from email communications. The end customer experiences this fragmented output, and the brand value inevitably suffers.

For a detailed look at how operations teams are managing these autonomous marketing workflows, see how AI marketing agents are reshaping content operations.

Case study: governed brand DNA vs. AI slop

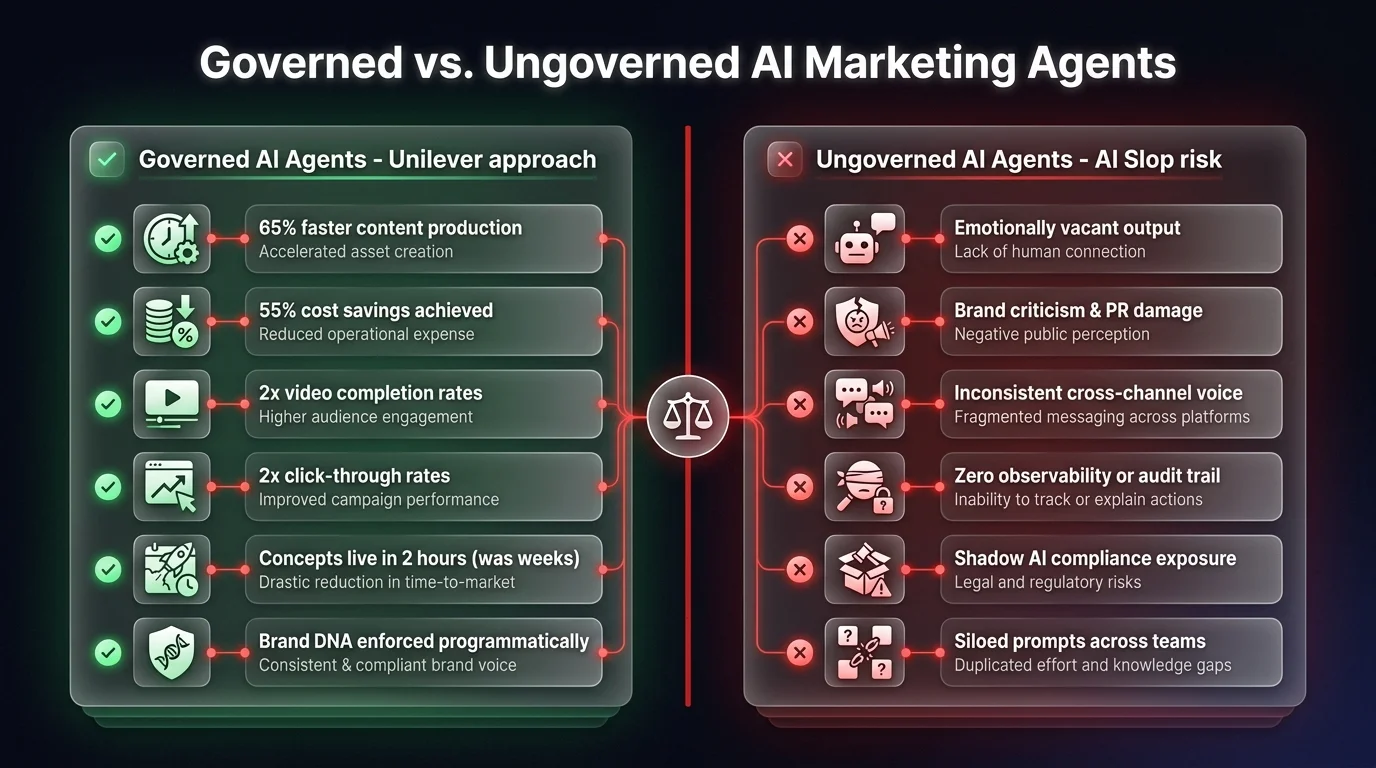

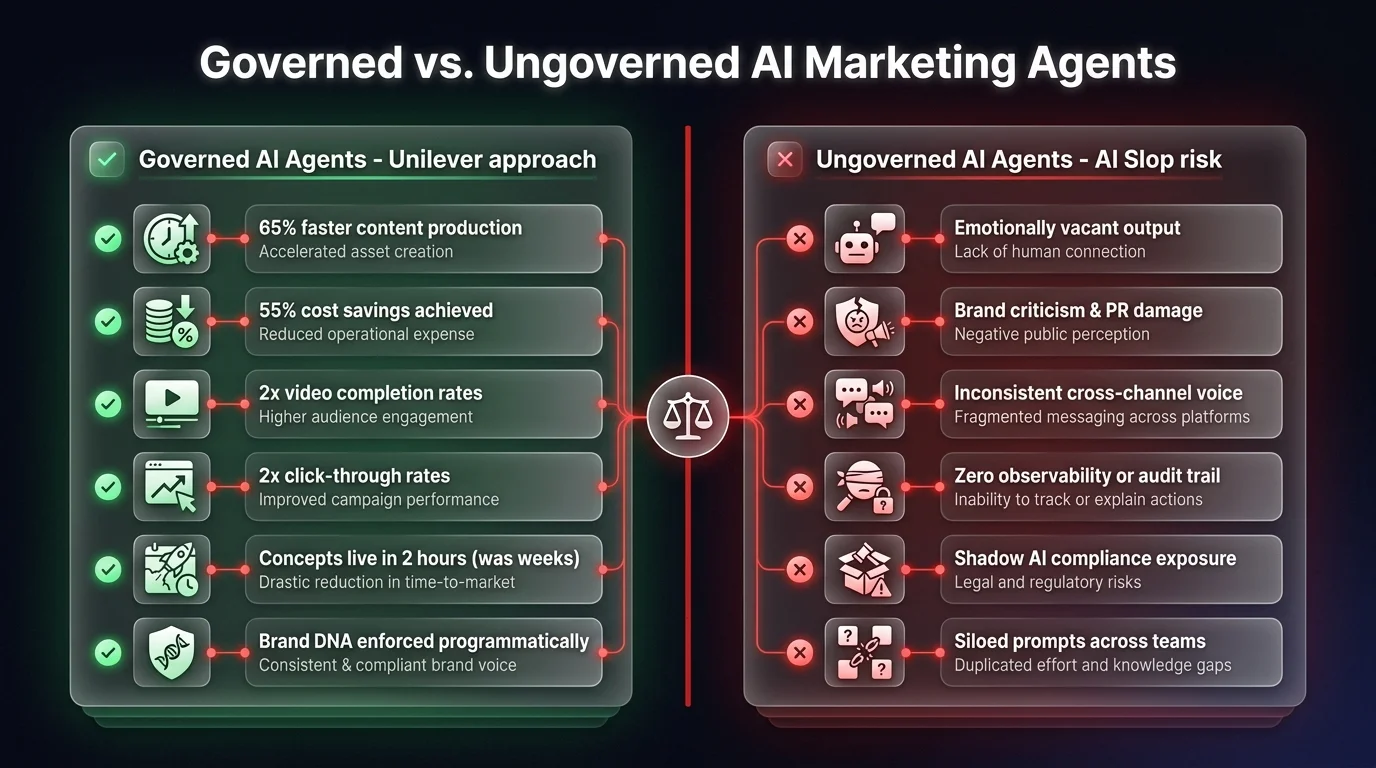

We can see the stark difference between governed and ungoverned AI deployment by looking at how major enterprises have approached the challenge of scaling output.

Consider Coca-Cola's attempts to integrate AI heavily into its holiday campaigns. Without strict operational guardrails, the output was widely criticized as "ad slop" - content that was technically correct but emotionally vacant and visually jarring. It is a classic example of prioritizing volume and speed over governed quality.

Contrast this with Unilever, which represents one of the most advanced examples of operational AI governance at scale. Rather than letting employees prompt generic models freely, Unilever built what they call a "brand DNA system" - a strictly governed training repository that ensures their AI models only source from explicitly approved brand voices, values, and visual identities.

Using this governed system, Unilever brought consumer concepts to life in just two hours - a process that previously took weeks. Content was created 65% faster, yielding up to 55% in cost savings, while simultaneously doubling key performance metrics like video completion rates and click-through rates.

The key takeaway: structured governance is the only way to scale AI without destroying brand equity.

Five operational capabilities required to govern AI marketing agents at scale

To successfully transition from fragmented experimentation to reliable operational systems, businesses must develop five critical capabilities. Operations leaders should view these as necessary systemic functions - solvable through strategic hiring, governed agent infrastructure, or both.

Centralized logic and prompt infrastructure

Unstructured prompting is the root cause of AI sprawl. When every team member prompts differently, the business loses control of its intellectual property and brand voice. Companies need a centralized capability to own the entire organization's prompting infrastructure.

By maintaining a shared, observable library of prompts and workflows, organizations stop teams from going rogue. According to industry benchmarks, companies utilizing structured, centralized prompt engineering report 40% fewer hallucinations and a 60% improvement in brand alignment across all AI communications.
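To make the idea of a shared, observable prompt library concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not a reference implementation: the names `PromptLibrary`, `PromptTemplate`, `register`, and `render` are hypothetical, and a real system would add persistent storage, access control, and an approval workflow.

```python
from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class PromptTemplate:
    """One approved prompt version (hypothetical structure)."""
    name: str
    version: int
    owner: str       # team accountable for this prompt
    body: Template   # prompt text with $placeholders


class PromptLibrary:
    """Single shared source of prompts; every use is logged."""

    def __init__(self) -> None:
        self._prompts: dict[str, list[PromptTemplate]] = {}
        # Each entry: (prompt name, version used, who requested it)
        self.audit_log: list[tuple[str, int, str]] = []

    def register(self, prompt_name: str, owner: str, body: str) -> PromptTemplate:
        """Store a new version; earlier versions are kept for traceability."""
        versions = self._prompts.setdefault(prompt_name, [])
        prompt = PromptTemplate(prompt_name, len(versions) + 1, owner, Template(body))
        versions.append(prompt)
        return prompt

    def render(self, prompt_name: str, requested_by: str, **values: str) -> str:
        """Fill the latest version of a prompt and record who used it."""
        if prompt_name not in self._prompts:
            raise KeyError(f"No approved prompt named {prompt_name!r}")
        prompt = self._prompts[prompt_name][-1]
        self.audit_log.append((prompt_name, prompt.version, requested_by))
        return prompt.body.substitute(**values)


library = PromptLibrary()
library.register(
    "linkedin_post",
    owner="brand-team",
    body="Write a LinkedIn post about $topic in a $voice voice.",
)
prompt = library.render(
    "linkedin_post",
    requested_by="content-agent",
    topic="pricing changes",
    voice="plainspoken",
)
print(prompt)
print(library.audit_log)
```

The design choice worth noting is that agents never hold their own prompt text. They ask the library by name, so a wording change lands everywhere at once and the audit log shows which agent used which version.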

Agent operations management

As businesses deploy fleets of autonomous agents, someone - or some system - must orchestrate them. Just as DevOps reshaped software deployment, Agent Ops will reshape operational execution by 2026.

This function acts as the central command for all autonomous tasks: monitoring performance, catching failure states, and onboarding new agents securely. Without Agent Ops, agents run unmonitored via disparate dashboards, produce wildly inconsistent output, and break in unpredictable ways that damage the customer experience.
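The three duties named above (onboarding agents, monitoring performance, and catching failure states) can be sketched in a few lines of Python. Everything here is an assumption made for illustration: the `AgentOps` class, the 20-run window, and the 20% failure threshold are hypothetical choices, not values taken from any particular product.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class AgentRecord:
    """What the control layer knows about one agent (hypothetical)."""
    name: str
    owner: str
    # Rolling window of recent run outcomes: True = success, False = failure
    recent_runs: deque = field(default_factory=lambda: deque(maxlen=20))

    def failure_rate(self) -> float:
        if not self.recent_runs:
            return 0.0
        failures = sum(1 for ok in self.recent_runs if not ok)
        return failures / len(self.recent_runs)


class AgentOps:
    """One place to onboard agents and watch for failure states."""

    def __init__(self, max_failure_rate: float = 0.2) -> None:
        self.max_failure_rate = max_failure_rate
        self.agents: dict[str, AgentRecord] = {}

    def onboard(self, name: str, owner: str) -> None:
        if name in self.agents:
            raise ValueError(f"Agent {name!r} is already registered")
        self.agents[name] = AgentRecord(name, owner)

    def record_run(self, name: str, succeeded: bool) -> None:
        # Raises KeyError for agents that were never onboarded,
        # so unregistered agents cannot report in silently.
        self.agents[name].recent_runs.append(succeeded)

    def unhealthy(self) -> list[str]:
        """Names of agents whose recent failure rate exceeds the threshold."""
        return sorted(
            name
            for name, record in self.agents.items()
            if record.failure_rate() > self.max_failure_rate
        )


ops = AgentOps(max_failure_rate=0.2)
ops.onboard("content-agent", owner="marketing-ops")
ops.onboard("campaign-agent", owner="marketing-ops")
for outcome in (True, True, False, True):
    ops.record_run("content-agent", outcome)
for outcome in (True, True, True):
    ops.record_run("campaign-agent", outcome)
print(ops.unhealthy())
```

In practice the list returned by `unhealthy()` would feed an alert or pause the agent. The point of the sketch is only that every agent reports to one registry instead of its own dashboard.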

Answer engine optimization (AEO) strategy for AI marketing agents

The way B2B buyers discover brands has fundamentally changed. In 2020, buyers searched and clicked blue links. Today, they ask AI answer engines.

ChatGPT now processes over 800 million daily queries, and AI-sourced web traffic has exploded by over 527% in recent months. Currently, 40% of all searches begin with AI, yet 80% of brands have not started optimizing for these answer engines. If your company is not featured when a buyer asks an AI agent what product to buy, you are locked out of the sales cycle entirely. Establishing an AEO protocol is a critical operational mandate - and with only 20% of organizations implementing it, there is a massive first-mover advantage.

Domain-specific quality guardrails

Scaling content through AI is effective only if domain expertise is injected into the process. This capability involves building strict style guides and platform-specific profiles that agents must follow.

What works on LinkedIn will fail in an email campaign. A governed AI content strategy ensures agents generate 70% of the foundational work based on deeply understood customer needs, allowing human domain experts to focus on the crucial 30% that makes content distinctive and emotionally resonant.
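As a rough illustration of platform-specific profiles, the Python sketch below checks an agent's draft against per-channel rules before it reaches a human editor. The `PlatformProfile` structure, the character limits, and the banned phrases are all hypothetical examples. A real style guide would carry far more than length and vocabulary rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlatformProfile:
    """Channel-specific rules an agent's draft must satisfy (illustrative)."""
    platform: str
    max_chars: int
    banned_phrases: tuple[str, ...] = ()


def check_draft(draft: str, profile: PlatformProfile) -> list[str]:
    """Return a list of violations; an empty list means the draft passes."""
    violations = []
    if len(draft) > profile.max_chars:
        violations.append(f"too long: {len(draft)} > {profile.max_chars} chars")
    lowered = draft.lower()
    for phrase in profile.banned_phrases:
        if phrase.lower() in lowered:
            violations.append(f"banned phrase: {phrase!r}")
    return violations


# Example values only; real limits and phrase lists belong in the style guide.
linkedin = PlatformProfile("linkedin", max_chars=1300, banned_phrases=("game-changer",))
email_subject = PlatformProfile("email_subject", max_chars=60, banned_phrases=("act now",))

draft = "Act now: our new pricing is a game-changer for operations teams."
print(check_draft(draft, linkedin))
print(check_draft(draft, email_subject))
```

Drafts that come back with violations go to the human experts, which keeps their time on the distinctive 30% instead of on catching mechanical errors.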

Creative execution governance

Just because a system can generate assets instantly does not mean those assets should be deployed. Creative execution governance balances velocity with quality control - dictating which specific models are authorized for video, copy, or image generation, and ensuring the aesthetic remains tightly aligned with the brand's core identity.
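One way to picture this gate is as two checks that an asset must pass before deployment: it was generated by a model authorized for that asset type, and a named person has signed off. The Python sketch below assumes exactly that. The model names, the `Asset` structure, and the `can_deploy` function are hypothetical placeholders.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class AssetType(Enum):
    COPY = "copy"
    IMAGE = "image"
    VIDEO = "video"


# Placeholder model names; the real allow-list is a governance decision.
AUTHORIZED_MODELS: dict[AssetType, frozenset[str]] = {
    AssetType.COPY: frozenset({"copy-model-a"}),
    AssetType.IMAGE: frozenset({"image-model-b"}),
    AssetType.VIDEO: frozenset(),  # no video model approved yet
}


@dataclass
class Asset:
    asset_type: AssetType
    model: str
    approved_by: Optional[str] = None  # reviewer who signed off, if any


def can_deploy(asset: Asset) -> bool:
    """An asset ships only if its model is authorized AND a human approved it."""
    model_ok = asset.model in AUTHORIZED_MODELS.get(asset.asset_type, frozenset())
    return model_ok and asset.approved_by is not None


banner = Asset(AssetType.IMAGE, model="image-model-b")
print(can_deploy(banner))   # generated by an authorized model, not yet reviewed
banner.approved_by = "creative-director"
print(can_deploy(banner))   # authorized model and human sign-off
```

Generation stays fast under this arrangement. Only deployment is gated, which is where velocity and quality control actually trade off.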

The shift to sovereign AI marketing agent infrastructure

The market consensus suggests solving this quality crisis by hiring an army of new specialists. However, for mid-market operations leaders, throwing human headcount at a fundamentally systemic problem is an unscalable, expensive patch.

The challenges of off-script agents, disjointed brand voices, and shadow AI are ultimately symptoms of using ungoverned, public AI tools. You do not necessarily need to hire a massive team to manually herd rogue AI - you need to fundamentally change your infrastructure.

This is where governed, sovereign AI agent systems become a critical operational advantage. By deploying an architecture with observable logic and data sovereignty, you can programmatically enforce the kind of guardrails Unilever built into its brand DNA system. A sovereign system centralizes your prompt libraries, natively monitors agent fleets for failure states, and restricts outputs to approved brand DNA - all within your secure environment.

If your organization is already dealing with the downstream effects of ungoverned AI content - spam complaints, brand inconsistency, or audience disengagement - our AI marketing spam risks analysis covers exactly how these failures compound over time.

AI will not replace companies. But companies that deploy governed, observable AI marketing agent systems will absolutely replace those that allow ungoverned agents to dilute their brand value. The path forward is not just generating more output - it is building the operational infrastructure to ensure every autonomous action drives a specific, high-quality business outcome.

Ready to see what a governed AI marketing agent system looks like in practice? Explore our approach to AI marketing automation or book a call to discuss your specific content governance challenges.