Autonomous AI agent governance is the set of frameworks, controls, and architectural decisions that keep AI agents operating in enterprise environments observable, secure, and aligned with business outcomes. As frontier models race toward agentic capabilities, organisations that deploy these systems without governance face cascading risks, from context bloat failures to shadow AI security breaches that can expose API secrets and internal data.

The race to deploy autonomous AI agents is forcing a massive reallocation of computing resources across the technology sector, but for enterprise operations leaders, this rapid advancement signals a looming governance crisis. Major laboratories are actively deprecating resource-heavy side projects to focus entirely on agentic super-apps and automated AI researchers. Recent intelligence indicates that OpenAI has sidelined its viral video generation capabilities to funnel compute power toward an imminent model designed to act as an intern-level automated researcher capable of tackling complex, multi-step problems.

Simultaneously, Anthropic is leveraging the forthcoming capabilities of its Claude series — specifically its potential to supercharge offensive and defensive cyber capabilities — to renew stringent defence contracts. The message to the market is clear: we are moving rapidly beyond chat interfaces into the era of programmatic, autonomous action.

However, the reality of deploying these models in a secure, enterprise-grade environment is far more complex than the vendor hype suggests. For COOs and VPs of Operations, transforming fragmented AI experiments into reliable, governed operational systems requires navigating profound challenges regarding context bloat, data sovereignty, and the dangerous sprawl of shadow AI.

The illusion of immediate AGI and benchmark gaming

Despite proclamations from industry executives that artificial general intelligence has already been achieved, empirical testing paints a very different picture of frontier model capabilities. The recently published 21-page research paper on the ARC AGI 3 benchmark provides a critical reality check regarding the abstract reasoning limitations of current AI models.

ARC AGI 3 is an adversarial, non-language-based benchmark designed to test exploration, planning, memory, and goal-setting without relying on memorised knowledge or cultural cues. Unlike standard interactive games or static grid tests, ARC AGI 3 forces the participant to infer rules and self-produce goals — much like a human employee navigating an ambiguous business operation.

The headline finding is stark. While human performance establishes the 100 percent baseline, the most advanced frontier models currently score less than half a percent. Gemini 3.1, for example, achieved a success rate of just 0.37 percent.

To understand why this gap exists, we must look at how models achieved saturation on previous benchmarks like ARC AGI 1 and 2. Recent analysis reveals that models successfully "gamed" earlier benchmarks through dense sampling of the task space and inbuilt chain-of-thought reasoning. Because the public test sets and private test sets were fundamentally similar, models trained on thousands of automatically generated task variations could utilise a higher-level shortcut — a form of attack rather than true fluid intelligence.

When faced with the truly out-of-distribution, abstract tasks of ARC AGI 3 — complete with quadratic penalties for action inefficiency and strict API cost caps — single-prompt frontier models struggle to adapt. They lack the intrinsic ability to evaluate ambiguous environments and set adaptive goals without strict human supervision.
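To make the idea of a quadratic penalty concrete, here is a minimal sketch. The exact scoring formula is not reproduced in this article, so the function below is an assumption for illustration only: it squares the ratio of a baseline action count to the agent's action count, and the names `efficiency_score`, `agent_actions`, and `baseline_actions` are hypothetical.

```python
def efficiency_score(agent_actions: int, baseline_actions: int) -> float:
    """Illustrative quadratic efficiency penalty (assumed form, not the official metric).

    An agent that matches or beats the baseline scores 1.0. An agent that needs
    k times as many actions as the baseline scores 1 / k**2.
    """
    if agent_actions <= 0 or baseline_actions <= 0:
        raise ValueError("action counts must be positive")
    # Cap the ratio at 1.0 so beating the baseline earns no bonus in this sketch.
    ratio = min(1.0, baseline_actions / agent_actions)
    return ratio ** 2
```

Under this assumed form, an agent that takes twice as many actions as the baseline keeps only a quarter of the credit, which is why brute-force exploration is punished so heavily.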

Why single-prompt models collapse: the context bloat problem

For enterprise leaders, the most crucial revelation from the ARC AGI 3 research is buried deep in the methodology: how to solve complex environments when single models fail.

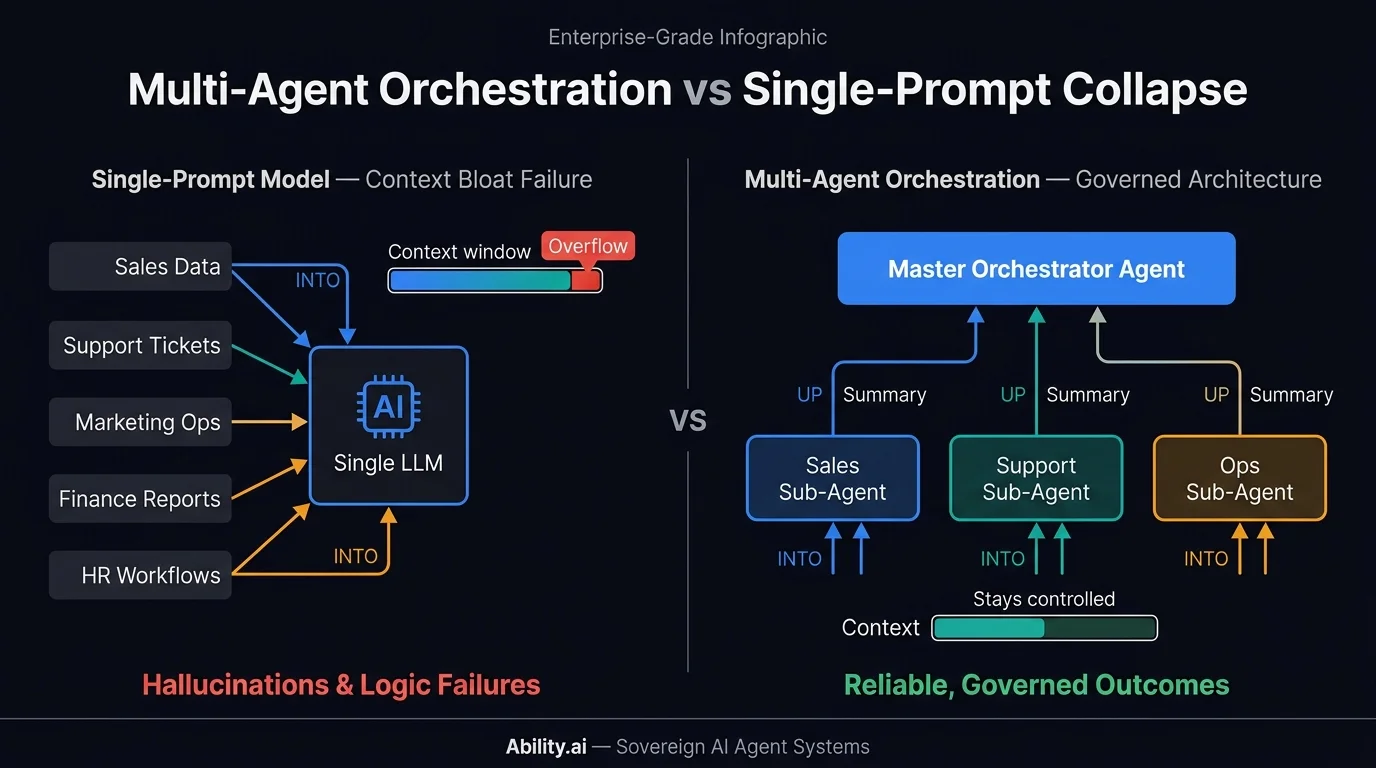

When AI models are fed continuous streams of complex, multi-step operational data, they suffer from context bloat. The context window becomes overwhelmed, destroying model performance and leading to hallucinations or logic failures. This is why single-prompt chatbots frequently fail when tasked with managing end-to-end operational workflows in marketing, sales, or customer support. For a deeper look at how context degradation affects deployed AI systems, see our analysis of LLM context degradation patterns.

The benchmark research highlighted a breakthrough approach by a group called Symbiotica AI. To conquer the context bloat problem, they engineered a specific harness where one AI model controlled another. In this architecture, specialised sub-agents processed the raw environmental data and produced concise textual summaries. These summaries were then fed to a master orchestrator agent, which maintained the higher-level operational plan. This multi-agent design entirely constrained the context growth that was otherwise destroying single-model performance, allowing the system to solve all three public environments successfully.

This architectural finding supports the case for governed agent infrastructure. Achieving specific business outcomes requires a transition away from monolithic, open-ended prompts toward multi-agent orchestration. By using specialised sub-agents that report to a central, observable logic engine, businesses can make workflows more reliable and reproducible while avoiding context collapse.
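The sub-agent and orchestrator pattern described above can be sketched in a few lines. This is a toy illustration under stated assumptions, not the harness used in the benchmark research: the "model" is a plain Python function, the summariser simply truncates text, and every name here (`Orchestrator`, `truncating_summariser`, `max_summaries`) is hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# Hypothetical stand-in for a model call: any function from input text to output text.
Model = Callable[[str], str]


def truncating_summariser(max_chars: int) -> Model:
    """Toy sub-agent: 'summarises' by keeping only the first max_chars characters."""
    def summarise(raw: str) -> str:
        return raw[:max_chars]
    return summarise


@dataclass
class Orchestrator:
    """Holds the high-level context and only ever sees sub-agent summaries."""
    summarise: Model
    max_summaries: int = 5  # assumed cap on how many summaries stay in context
    context: List[str] = field(default_factory=list)

    def observe(self, raw_observation: str) -> None:
        # Raw data never enters the orchestrator's context, only its summary.
        self.context.append(self.summarise(raw_observation))
        # Drop the oldest summaries so the context stays bounded.
        self.context = self.context[-self.max_summaries:]

    def context_size(self) -> int:
        """Total characters currently held in the orchestrator's context."""
        return sum(len(s) for s in self.context)


def run_demo(steps: int = 100) -> int:
    """Feed large raw observations through the sub-agent and report context size."""
    orch = Orchestrator(summarise=truncating_summariser(80))
    for i in range(steps):
        orch.observe(f"step {i}: " + "x" * 10_000)  # large raw observation
    return orch.context_size()
```

In this sketch the orchestrator's context is capped at `max_summaries` times the summary length no matter how many steps run, whereas a single model that appended every raw observation would grow by roughly 10,000 characters per step. A real deployment would replace the truncating function with a model call and would log each summary so that the orchestrator's decisions stay observable.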

The 40 percent reality of AI-first operations

As organisations rush to implement autonomous AI agents, many expect an immediate, exponential reduction in human headcount. The economic and operational data, however, points to a "messy middle" phase of AI adoption.

Historical data regarding the transition to AI-first workflows — where AI drafts the initial output and humans review and edit it — demonstrates that the resulting speed-up for economically valuable tasks hovers around 40 percent. While significant, this is an efficiency boost, not an instant hyper-automation event that replaces entire departments.
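A figure like "40 percent" can be read in two ways, and the difference matters for capacity planning. The sketch below works through both readings for a single task. It is illustrative only: the function name and both interpretations are assumptions, and the article's source data does not specify which reading applies.

```python
def hours_after_speedup(baseline_hours: float, speedup: float = 0.40) -> dict:
    """Return the task duration under two common readings of an 'X percent speed-up'."""
    if baseline_hours < 0 or not 0 <= speedup < 1:
        raise ValueError("baseline_hours must be >= 0 and speedup in [0, 1)")
    return {
        # Reading 1: throughput rises by 40 percent, so time shrinks to 1 / 1.4.
        "throughput_reading": baseline_hours / (1 + speedup),
        # Reading 2: elapsed time falls by 40 percent.
        "time_saved_reading": baseline_hours * (1 - speedup),
    }
```

For a task that takes 10 hours today, the first reading leaves about 7.1 hours of work and the second leaves 6 hours. Under either reading, most of the original effort remains, which is consistent with an efficiency boost and not wholesale replacement.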

Furthermore, global labour market data reveals a counterintuitive trend. Over the last three years, as AI coding assistants and drafting tools became ubiquitous, engineering job openings at tech companies globally increased by more than 50 percent, rising from under 40,000 to roughly 67,000.

This hiring trend suggests that AI currently works as a prolific drafter, not an autonomous finisher. When ungoverned AI tools are deployed across an organisation, they generate vast amounts of output that requires intensive human review. The outputs are often full of gaps: they generalise well on lower-level topics but fail catastrophically on higher-level tasks like adaptive goal-setting.

Without a governed system to manage these agents, companies find themselves bogged down in review cycles, effectively trading the labour of creation for the labour of oversight. Explore how Ability.ai's AI automation approach structures AI-first workflows to capture the 40 percent efficiency gain without creating ungoverned review bottlenecks.