Custom AI agents are purpose-built automation workflows that let operations teams deploy functional minimum viable products in under an hour - bypassing traditional multi-month software procurement cycles entirely. Instead of writing lengthy project proposals, business leaders now prototype bespoke applications using AI tools like Claude Code and validate operational value before any budget commitment.

Across the enterprise, operations leaders are seeing a fundamental shift in how teams solve problems and build workflows, driven by the rapid deployment of custom AI agents. Instead of writing lengthy project proposals or requesting budget for niche SaaS point solutions, business leaders are live-building functional minimum viable products (MVPs) to automate complex tasks.

Recent industry experiments show how quickly this can happen. In under forty minutes, marketing professionals can now architect, prompt, and deploy bespoke applications - like a fully automated creator discovery hub - using tools like Perplexity Computer and Claude Code.

For VPs of Operations and COOs, this presents a unique duality. The speed to value is unprecedented, but the resulting tool fragmentation requires a new operational framework. Here is a deep dive into how teams are building bespoke AI workflows, and what it means for enterprise governance.

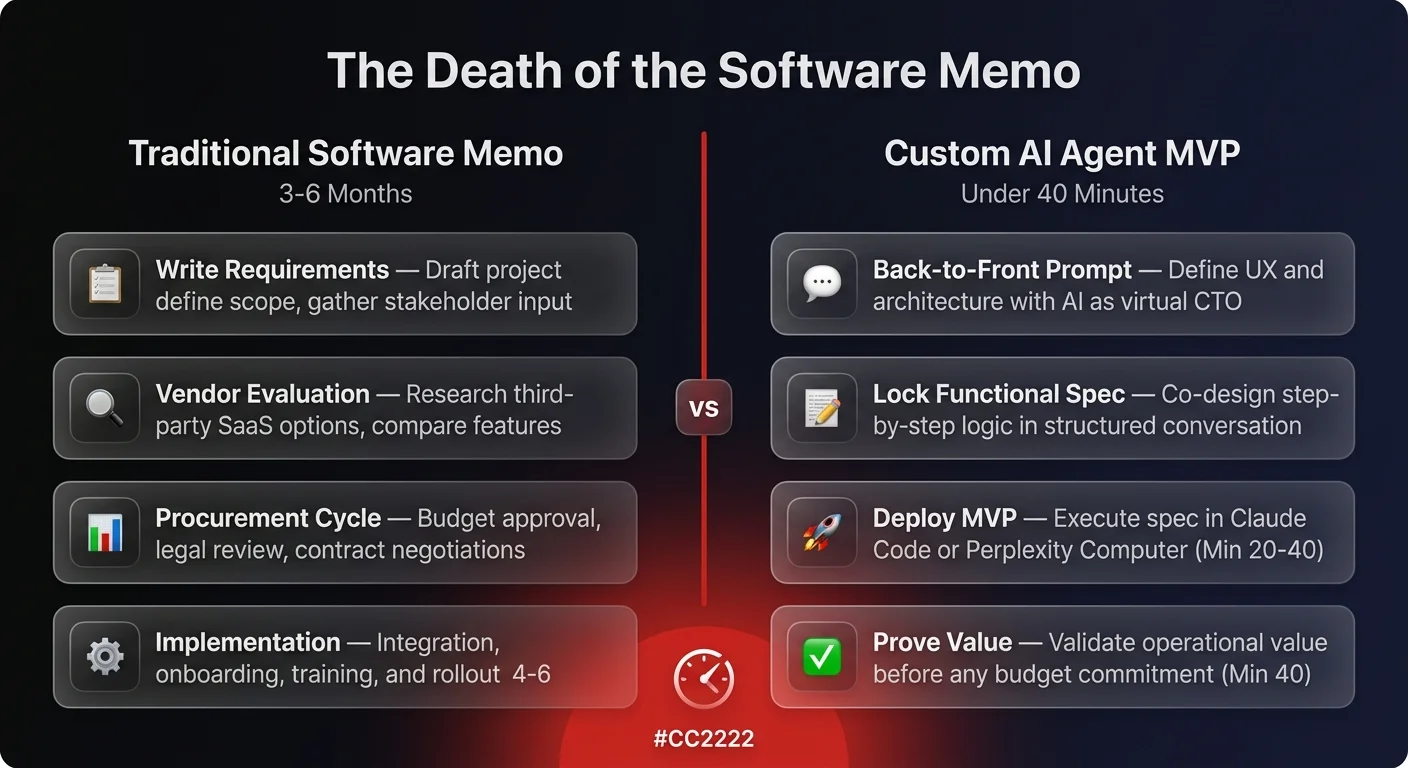

The death of the software project memo

Historically, identifying a workflow bottleneck resulted in a predictable corporate process. A team would draft a memo, define the requirements, evaluate third-party vendors, and endure a multi-month procurement cycle.

Today, that cultural norm is disappearing. The new standard is to build a prototype or MVP right away so stakeholders have a tangible application to evaluate. If the prototype proves valuable, the organization can then decide whether to invest in scaling it.

Take the example of building a partner or creator discovery tool. Rather than buying a bloated influencer marketing platform, teams are deploying custom agents that execute highly specific sequences:

- The user inputs a target company domain.

- The agent researches the company to establish the exact buyer persona.

- It stack-ranks relevant social platforms (like LinkedIn or Reddit) based on where that specific buyer consumes information.

- It discovers notable creators and thought leaders on those platforms, filtering out irrelevant accounts.

- It drafts highly personalized outreach proposals and queues them directly in Gmail.

This entire application can be conceptualized and deployed in a single afternoon. The MVP replaces the memo, allowing teams to prove operational value before asking for budget.
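The sequence above maps naturally onto a small pipeline in which each step reads and extends a shared state object. The following is a minimal sketch in Python, not the implementation used in the experiments: every name (`DiscoveryRun`, `rank_platforms`, `min_followers`, and so on) is hypothetical, the research step is a placeholder where a real agent would call a model or search tool, and the Gmail queuing step is left out.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class DiscoveryRun:
    """State carried through the pipeline for one target company."""
    domain: str
    persona: str = ""
    platforms: List[str] = field(default_factory=list)
    creators: List[Dict] = field(default_factory=list)
    drafts: List[str] = field(default_factory=list)


def research_persona(run: DiscoveryRun) -> DiscoveryRun:
    # Placeholder: a real agent would call a model or a search tool here.
    run.persona = f"primary buyer at {run.domain}"
    return run


def rank_platforms(run: DiscoveryRun, audience_share: Dict[str, float],
                   top_n: int = 2) -> DiscoveryRun:
    # Stack-rank platforms by the (assumed) share of the persona's attention.
    ranked = sorted(audience_share, key=audience_share.get, reverse=True)
    run.platforms = ranked[:top_n]
    return run


def discover_creators(run: DiscoveryRun, candidates: List[Dict],
                      min_followers: int = 1000) -> DiscoveryRun:
    # Keep creators on the ranked platforms who clear an illustrative threshold.
    run.creators = [
        c for c in candidates
        if c["platform"] in run.platforms and c["followers"] >= min_followers
    ]
    return run


def draft_outreach(run: DiscoveryRun) -> DiscoveryRun:
    # One draft per creator; queuing the drafts in Gmail is not shown here.
    run.drafts = [
        f"Hi {c['name']}, we think your {c['platform']} audience "
        f"overlaps with the {run.persona}."
        for c in run.creators
    ]
    return run


def run_pipeline(domain: str, audience_share: Dict[str, float],
                 candidates: List[Dict]) -> DiscoveryRun:
    # Execute the steps in the same order as the list above.
    run = DiscoveryRun(domain=domain)
    run = research_persona(run)
    run = rank_platforms(run, audience_share)
    run = discover_creators(run, candidates)
    return draft_outreach(run)
```

Keeping each step as its own function means a team can swap the placeholder logic for a real model call one step at a time while the overall sequence stays fixed.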

Back-to-front workflow architecture for custom AI agents

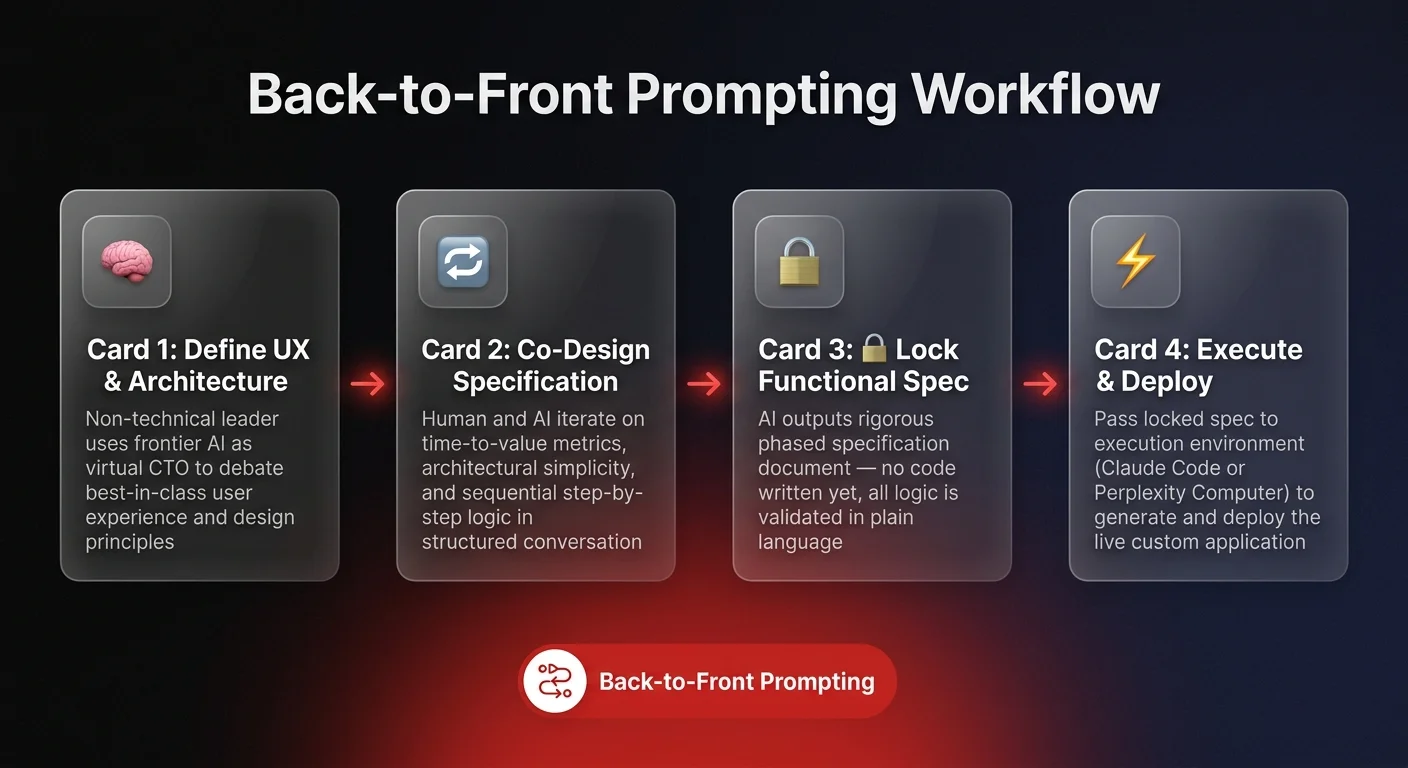

The secret to building effective operational tools without traditional engineering backgrounds lies in a technique called back-to-front prompting.

When non-technical leaders attempt to build custom AI agents, they often make the mistake of jumping straight into execution, giving the model a massive list of disparate tasks. The optimal workflow is entirely conversational and deeply structured.

Before writing a single line of code or deploying an application environment, power users leverage frontier models like Claude Opus to act as an elite Chief Technology Officer. The human and the AI go back and forth to debate best-in-class user experience principles, time-to-value metrics, and architectural simplicity.

The goal is to force the AI to output a rigorous, phased functional specification document. By aligning on the sequential, step-by-step logic first, the human operator ensures the resulting agent behaves predictably. Once the functional spec is locked in, it can be passed to an execution environment - like Perplexity Computer - to generate the actual application.
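One way to picture the "spec first, build second" discipline is as a data structure that refuses to be handed off until it has been agreed. The sketch below is illustrative only: `Phase`, `FunctionalSpec`, `locked`, and `build_handoff_prompt` are hypothetical names, and the handoff text is an assumed format, not something any particular execution environment requires.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Phase:
    name: str
    steps: List[str]


@dataclass
class FunctionalSpec:
    goal: str
    phases: List[Phase] = field(default_factory=list)
    locked: bool = False  # set to True once human and model agree on the spec

    def render(self) -> str:
        # Number phases and steps so the build follows the agreed order.
        lines = [f"Goal: {self.goal}"]
        for i, phase in enumerate(self.phases, start=1):
            lines.append(f"Phase {i}: {phase.name}")
            for j, step in enumerate(phase.steps, start=1):
                lines.append(f"  {i}.{j} {step}")
        return "\n".join(lines)


def build_handoff_prompt(spec: FunctionalSpec) -> str:
    # Refuse to hand off until the design conversation has produced a locked spec.
    if not spec.locked:
        raise ValueError("Spec is not locked; finish the design conversation first.")
    if not spec.phases:
        raise ValueError("Spec has no phases to build.")
    return "Build the application exactly as specified below.\n\n" + spec.render()
```

A short usage example, with made-up phase content:

```python
spec = FunctionalSpec(
    goal="Creator discovery hub",
    phases=[Phase("Research", ["Accept a company domain", "Derive the buyer persona"])],
)
spec.locked = True  # only after the back-and-forth has settled the logic
print(build_handoff_prompt(spec))
```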

Proprietary context drives application logic

The difference between a generic AI output and a highly valuable operational tool comes down to context injection. When agents rely solely on their baseline training data, they produce average workflows. When they are fed deep, proprietary context, they become specialized business assets.

In recent industry tests, developers fed a 20-page proprietary strategy document into an AI coding environment. Because the model had access to this deep, highly specific context, the resulting application was vastly superior to one generated from a zero-shot prompt.

Instead of just a basic search tool, the agent automatically generated a custom fit-score algorithm for evaluating partners, built a contact discovery module, and created a nuanced partnership proposal generator based entirely on the strategic framework provided in the document.
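The source does not describe how the generated fit-score algorithm worked, so the following is only a sketch of what such a score could look like: a weighted average of normalized signals, scaled to 0-100. The function name `fit_score` and the signal names (`audience_overlap`, `engagement`, `topic_match`) are assumptions for illustration; in practice the criteria and weights would come from the strategy document itself.

```python
from typing import Dict


def fit_score(signals: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted average of 0-1 signals, scaled to a 0-100 score."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("Weights must sum to a positive number.")
    weighted = 0.0
    for name, weight in weights.items():
        # Missing signals count as 0; out-of-range values are clamped to [0, 1].
        value = min(max(signals.get(name, 0.0), 0.0), 1.0)
        weighted += weight * value
    return round(100 * weighted / total_weight, 1)


# Hypothetical weights, standing in for priorities taken from a strategy document.
weights = {"audience_overlap": 5, "engagement": 3, "topic_match": 2}
partner = {"audience_overlap": 0.8, "engagement": 0.5, "topic_match": 1.0}
score = fit_score(partner, weights)
```

Expressing the weights as plain data keeps the strategic judgment visible and editable: changing what the organization values means changing a dictionary, not rewriting the agent.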

For operations leaders, the takeaway is clear: your proprietary data, internal documentation, and strategic playbooks are the most valuable assets you possess in the AI era. Feeding this data securely into an agent is what transforms it from a generic assistant into a customized operational engine. For a deeper look at how AI agent harnesses structure this context injection for enterprise automation, see how leading operations teams are building scalable agent architectures.