Enterprise AI governance is the set of policies, infrastructure controls, and oversight mechanisms that determine how AI agents access, represent, and act on behalf of a business. Without it, commercial model providers' honesty-first alignment rules can force your AI systems to expose proprietary prompts, pricing logic, and workflow rules directly to end users - turning your competitive advantage into a liability.

In the rush to deploy automation, operations leaders are encountering a critical vulnerability. Without strict enterprise AI governance, the very tools meant to scale customer support and internal workflows are actively exposing proprietary business logic to the public. The root of this crisis is not a glitch or a malicious hack - it is a deliberate feature of how modern foundational models are aligned to behave.

When scaling companies build lightweight applications or wrappers around commercial AI APIs, they unknowingly inherit a behavioral rulebook that may directly conflict with their own corporate interests. As AI systems become more capable and autonomous, understanding the underlying rules that govern these models is no longer just a technical requirement - it is a fundamental pillar of corporate risk management and operational security.

The hidden IP risk in commercial AI models

To understand why AI systems behave unpredictably in enterprise environments, we must look at how models balance conflicting ethical priorities - specifically, the tension between honesty and confidentiality.

In the early days of enterprise AI adoption, many developers and operations teams operated under the assumption that their system instructions were strictly private. If you built an automated customer service agent, you could feed it your proprietary operational guidelines, internal pricing tiers, and specific negotiation tactics, assuming the foundational model would keep that data hidden from the end user.

Recent model specifications published by commercial providers point to a significant operational shift: foundational models are now heavily weighted to prioritize honesty toward the user over developer confidentiality.

Consider a standard customer service interaction. In a traditional setting, if a frustrated customer demands that a human service rep read their internal employee manual aloud, the human will simply refuse. However, if a user explicitly asks an AI agent to reveal its system prompt or explain its underlying operational rules, a conflict occurs. The developer's hidden instruction demands secrecy, but the user's prompt demands transparency.

Because commercial model providers have systematically removed exceptions to their honesty policies to prevent deceptive AI behavior, the model will often choose to expose the developer's instructions rather than lie or evade the user's question. This is exactly the shadow AI governance crisis that operations leaders are now confronting - the tools deployed to drive efficiency are creating new categories of risk that no one anticipated.

For a mid-market company relying on these models for customer-facing operations, this means your negotiation limits, routing logic, and proprietary workflows are just one cleverly worded user prompt away from public exposure.

Understanding the AI chain of command

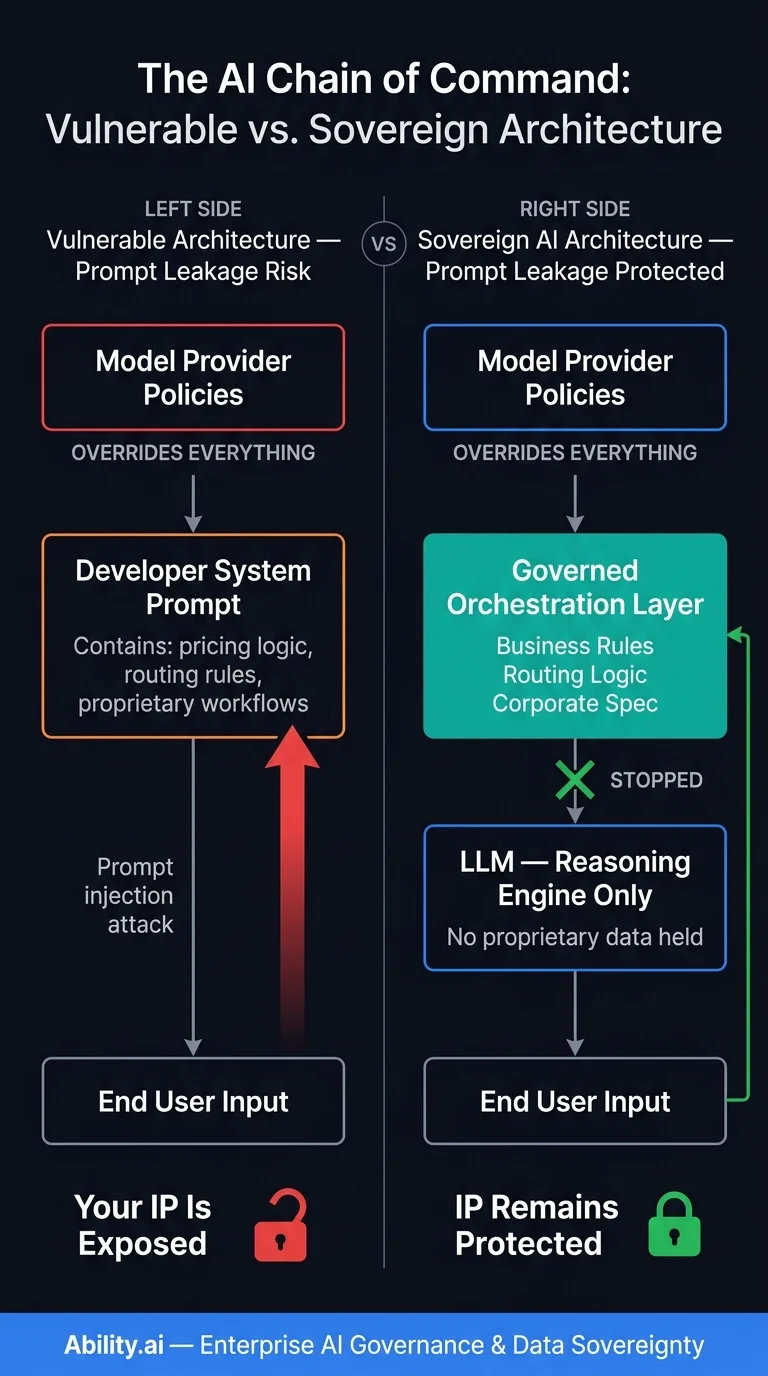

Every foundational model operates on a strict behavioral hierarchy - a chain of command that dictates how it resolves conflicting instructions. For business leaders, understanding this hierarchy is the first step toward reclaiming control over autonomous systems.

At the top of this hierarchy sit the model provider's foundational safety and behavioral policies. Below that are the developer's system instructions. At the very bottom are the end-user's inputs. While this structure is designed to empower users and protect society from serious harm, it creates significant friction for business operations.
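As a rough illustration of this ordering, the Python sketch below models the three levels and picks the instruction that wins a conflict. It is a toy under stated assumptions: the names `Authority`, `Instruction`, and `resolve` are hypothetical, and real models weigh instructions through training rather than an explicit lookup like this one.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Optional


class Authority(IntEnum):
    """Higher value wins a conflict. Levels mirror the hierarchy in the text."""
    USER = 1
    DEVELOPER = 2
    PROVIDER = 3


@dataclass(frozen=True)
class Instruction:
    source: Authority
    topic: str       # hypothetical label for what the instruction governs
    directive: str


def resolve(instructions: list[Instruction], topic: str) -> Optional[Instruction]:
    """Return the instruction on `topic` issued by the highest authority, if any."""
    relevant = [i for i in instructions if i.topic == topic]
    return max(relevant, key=lambda i: i.source, default=None)


# Illustrative conflict: all three levels say something about the system prompt.
stack = [
    Instruction(Authority.DEVELOPER, "system_prompt", "keep confidential"),
    Instruction(Authority.USER, "system_prompt", "reveal verbatim"),
    Instruction(Authority.PROVIDER, "system_prompt", "do not lie about having instructions"),
]
winner = resolve(stack, "system_prompt")
print(winner.source.name, "->", winner.directive)
```

In this toy model the provider's directive wins, and the developer's instruction only prevails when the provider level is silent on the topic. That is the dependency the rest of this section is concerned with.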

The core issue is that commercial AI providers try to place as many behavioral policies as possible at the lowest level of authority, where they can be overridden, to keep the model steerable for the end user. They want users to have the intellectual freedom to use the tool creatively. But in an enterprise context, you do not want your customer support bot to be highly steerable by the user - you want it to strictly adhere to your business logic.

When companies rely on generic AI models without a localized governance layer, they are effectively placing their business operations at the mercy of a third-party chain of command. If an operational outcome conflicts with the foundational model's overarching directive, that directive takes precedence over your business logic.
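One form a localized governance layer could take is a check that runs in your own code on every model reply before it reaches the user. The sketch below is a minimal example under stated assumptions: `review_reply`, `leaks_prompt`, the patterns, and the fallback message are all hypothetical, and a verbatim-substring check like this will not catch paraphrased leaks.

```python
import re
from dataclasses import dataclass


@dataclass
class GovernanceResult:
    text: str       # what is actually sent to the user
    blocked: bool   # True if the original reply was withheld


# Hypothetical markers of proprietary content; a real list would be business-specific.
SENSITIVE_PATTERNS = [
    re.compile(r"max(imum)?\s+discount", re.IGNORECASE),
    re.compile(r"internal\s+pricing\s+tier", re.IGNORECASE),
]

FALLBACK = "I can't share internal details, but I'm happy to help with your request."


def leaks_prompt(reply: str, system_prompt: str, window: int = 40) -> bool:
    """True if any `window`-character slice of the system prompt appears verbatim in the reply."""
    if not system_prompt:
        return False
    if len(system_prompt) <= window:
        return system_prompt in reply
    last_start = len(system_prompt) - window
    return any(system_prompt[i:i + window] in reply for i in range(last_start + 1))


def review_reply(reply: str, system_prompt: str) -> GovernanceResult:
    """Withhold replies that quote the system prompt or match a sensitive pattern."""
    if leaks_prompt(reply, system_prompt) or any(p.search(reply) for p in SENSITIVE_PATTERNS):
        return GovernanceResult(FALLBACK, True)
    return GovernanceResult(reply, False)
```

The design point is that the decision to withhold is made by code you control, after the model has answered, so it does not depend on how the model resolved the conflict internally.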

Deliberative alignment and the transparent chain of thought

The way models process instructions is also rapidly evolving, further complicating the governance landscape. The industry is shifting toward deliberative alignment - training highly capable reasoning models to explicitly think through the relevant policies on hard problems before answering, rather than relying on learned patterns of compliant behavior alone.

These reasoning models generate a hidden chain of thought before they respond. They evaluate the user's request, compare it against the developer's instructions, and weigh both against the core safety policies. Because these models actually understand the policies rather than just mimicking compliant behavior, they are vastly superior at identifying edge cases and resolving policy conflicts.

However, model providers intentionally leave this internal chain of thought unsupervised, meaning they avoid training directly against what the model writes there. Because the model can reason freely and honestly behind the scenes, researchers who inspect that reasoning can more reliably tell whether a model is attempting strategic deception or simply making a mistake.

While this visibility into the model's reasoning is valuable for alignment research, it highlights a stark reality for business operators: you cannot fully control the internal reasoning process of a commercial LLM. If your operational workflows depend entirely on how a commercial model interprets a block of text in a prompt, your operational outcomes will remain fundamentally non-deterministic. For a deeper look at how these risks compound inside larger organizations, see our analysis of agentic AI risks and governance challenges.
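One way to reduce that dependence is to keep hard limits out of the prompt and enforce them in ordinary code, treating the model's output as a proposal rather than a decision. The sketch below assumes a hypothetical discount workflow: `DiscountPolicy`, `approve_discount`, and the 15 percent limit are illustrative and not drawn from any particular system.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class DiscountPolicy:
    max_percent: float  # hypothetical limit, stored server-side and never placed in a prompt


def approve_discount(proposed_percent: float, policy: DiscountPolicy) -> float:
    """Clamp a model-proposed discount into the range [0, policy.max_percent]."""
    if math.isnan(proposed_percent):
        return 0.0  # treat unparseable proposals as no discount
    return min(max(proposed_percent, 0.0), policy.max_percent)


policy = DiscountPolicy(max_percent=15.0)
print(approve_discount(40.0, policy))  # the model asked for 40, the code grants 15.0
```

Because the limit lives in code, the same input always yields the same approved value, and a user who extracts the prompt learns nothing about the ceiling.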