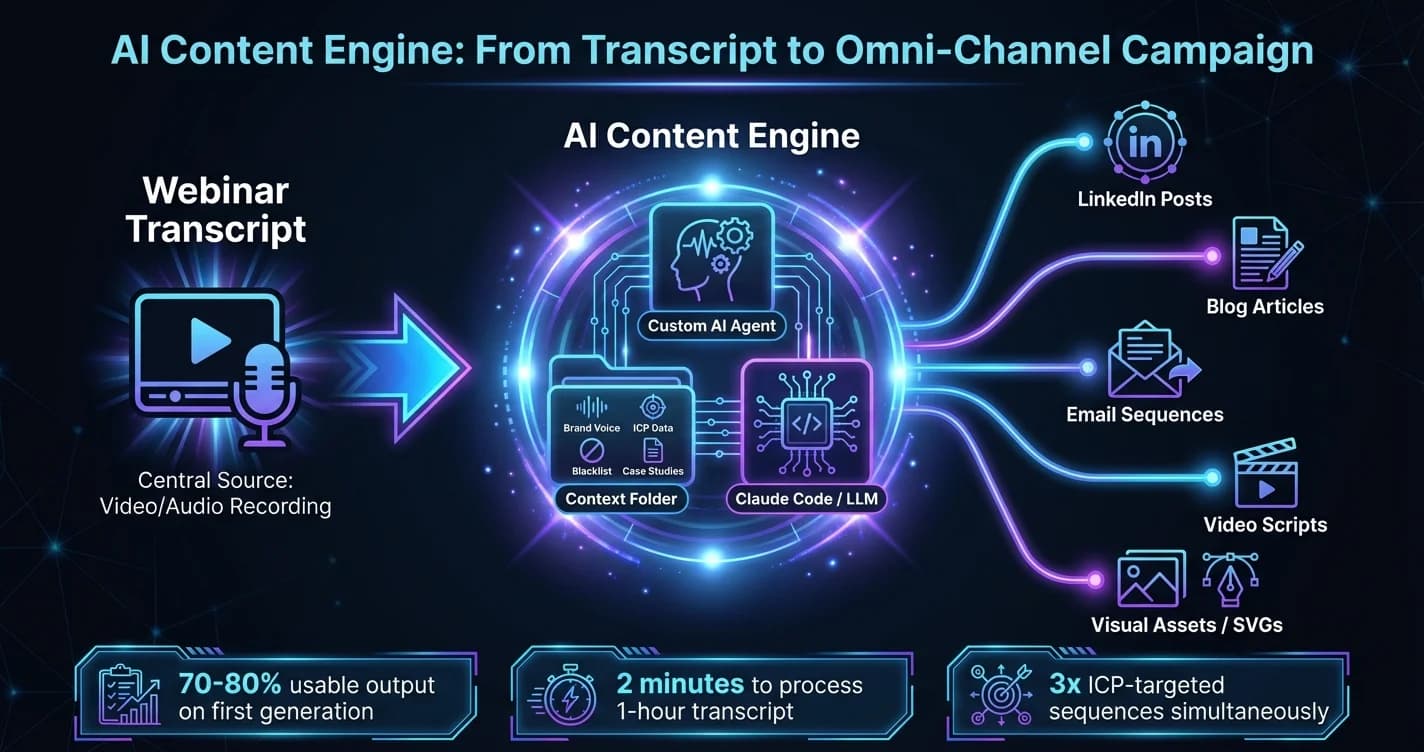

An AI content engine is a custom AI agent system that transforms a single source — such as a webinar transcript — into a complete omni-channel marketing campaign, generating social posts, blog articles, email sequences, and visual assets simultaneously. In 2026, mid-market companies deploying a governed AI content engine are reducing post-event content production from weeks to minutes — but the shadow AI risks introduced by local, ungoverned deployments are just as significant as the productivity gains.

The deployment of an AI content engine has become a critical differentiator for mid-market and scaling companies. As organizations attempt to scale their marketing operations without ballooning headcount, the ability to automatically transform a single piece of source material into an omni-channel marketing campaign is no longer just a competitive advantage — it is an operational necessity.

However, the way these systems are built is changing. The industry is moving away from fragile, multi-step automation platforms toward custom, locally deployed AI agents. This shift improves creative output and efficiency, but it also introduces complex challenges regarding data sovereignty, governance, and operational security.

Here is a comprehensive look at how modern AI content engines are being constructed, the operational workflows they replace, and the strategic governance steps leaders must take to deploy them securely.

The live event shift and the post-webinar ecosystem

To understand the value of a custom AI content engine, we must first look at the source material fueling it. Despite the rapid advancement of digital marketing, webinars and live remote events are experiencing a resurgence. As more content becomes AI-generated, audiences are actively seeking live human interaction as a counterweight to automated experiences.

But the true operational value of a webinar is rarely the live attendance. The most significant return on investment comes from the post-event content ecosystem.

Traditionally, a company would host a webinar using platforms like Riverside or Zoom. After the event, a marketing team would spend weeks manually dissecting the recording to create follow-up materials. The modern workflow follows a structured, three-step framework, and the AI content engine in its final step condenses this timeline into minutes:

- Planning: Utilizing current Ideal Customer Profile (ICP) data and email lists to draft highly targeted topics.

- Promotion: Leveraging distribution networks to drive RSVPs, rather than relying solely on organic social media followers.

- Post-event extraction: Feeding the raw event transcript into a custom AI agent to generate a complete ecosystem of repurposed content.

It is in this third step — the AI content engine itself — where the operational mechanics have completely transformed.

The evolution from fragile automations to custom AI content engines

Until recently, the standard method for automating post-webinar content relied heavily on integration tools like Zapier or n8n. A typical workflow would trigger when a Riverside transcript was finalized, sending the text through a Zapier webhook to an AI model, which would then output generic social posts into a Google Doc or Notion workspace.

While functional, this approach is fundamentally flawed for scaling operations. Integrations are brittle. Workflows break silently, and teams often remain unaware that their automated sequences have been offline for days until the pipeline dries up.

Today, the most effective strategy replaces these fragile connections with a dedicated AI content engine built entirely within environments like Claude Code. Rather than chaining conditional "if/then" triggers across separate tools, these custom agents handle the entire transformation in one place. They execute multiple specialized API calls concurrently (one for LinkedIn posts, one for blog articles, one for email sequences), all guided by detailed system prompts and deep contextual data.
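The fan-out described here can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the article's implementation: `call_model` is a hypothetical stub standing in for whichever LLM client you use, and the prompt text in `CHANNEL_PROMPTS` is invented for the example.

```python
import asyncio

# Hypothetical per-channel instructions (assumption: a real engine would
# load much richer system prompts than these one-liners).
CHANNEL_PROMPTS = {
    "linkedin": "Write three LinkedIn posts from this transcript.",
    "blog": "Write a blog article from this transcript.",
    "email": "Write a three-part email sequence from this transcript.",
}


async def call_model(system_prompt: str, transcript: str) -> str:
    """Stand-in for a real LLM API call (assumption: swap in your provider's client)."""
    await asyncio.sleep(0)  # yields control, as a network request would
    return f"[{system_prompt}] draft based on {len(transcript)} characters of transcript"


async def generate_campaign(transcript: str) -> dict[str, str]:
    """Run one specialized call per channel concurrently and key results by channel."""
    channels = list(CHANNEL_PROMPTS)
    results = await asyncio.gather(
        *(call_model(CHANNEL_PROMPTS[c], transcript) for c in channels),
        return_exceptions=True,  # collect errors so they can be reported per channel
    )
    campaign = {}
    for channel, result in zip(channels, results):
        if isinstance(result, Exception):
            # Fail loudly instead of silently dropping a channel.
            raise RuntimeError(f"{channel} generation failed") from result
        campaign[channel] = result
    return campaign


if __name__ == "__main__":
    drafts = asyncio.run(generate_campaign("Raw webinar transcript text goes here."))
    for channel, draft in drafts.items():
        print(channel, "->", draft)
```

Collecting exceptions and re-raising them with the channel name is a deliberate choice: a failed call surfaces immediately rather than leaving one asset quietly missing from the campaign.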

This shift allows non-technical team members to build highly complex systems. By simply describing their ideal workflow in plain language, a user can generate a markdown file that dictates the agent's behavior, refine it through conversational prompting, and deploy a bespoke tool in a matter of hours. As we covered in "autonomous marketing agents and the creative velocity advantage," this speed of deployment is itself becoming a competitive moat.

The context folder framework: eliminating generic AI slop

One of the most persistent complaints from operations and marketing leaders is that AI-generated content sounds robotic, generic, and off-brand. The telltale signs — starting a post with "Here's what actually works" or relying heavily on predictable sentence structures — instantly degrade brand trust.

One way to avoid this generic output is the "Context Folder" framework. When a custom AI content engine is built locally, it can be connected directly to a directory on a user's machine that houses dense, highly specific company data.

For roughly 70 to 80 percent of the first-generation output to be usable, the agent must be fed:

- Writing examples directly from the founder or CEO

- Detailed case studies and historical performance data

- Strict blog post and brand voice guidelines

- Specific ICP lists and target audience segmentations

- A "marketing blacklist" of banned industry buzzwords and overused phrases

By pulling from these context documents rather than relying solely on the LLM's base training, the AI content engine produces more nuanced, on-brand content. When an agent processes a transcript, it checks its drafts against the brand's documented tone and examples, so the resulting assets require minimal human editing.
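A minimal Python sketch of the context folder idea follows. It assumes the folder holds plain `.md` and `.txt` files and that the marketing blacklist is a text file with one banned phrase per line. The file names and the helper functions (`load_context`, `load_blacklist`, `build_system_prompt`, `find_banned_phrases`) are hypothetical and chosen only for illustration.

```python
import tempfile
from pathlib import Path


def load_context(folder: Path) -> str:
    """Concatenate every .md or .txt file in the folder into one context string."""
    parts = []
    for path in sorted(folder.iterdir()):
        if path.is_file() and path.suffix in {".md", ".txt"}:
            text = path.read_text(encoding="utf-8").strip()
            parts.append(f"## {path.name}\n{text}")
    return "\n\n".join(parts)


def load_blacklist(path: Path) -> list[str]:
    """Read one banned phrase per line, lowercased, skipping blank lines."""
    lines = path.read_text(encoding="utf-8").splitlines()
    return [line.strip().lower() for line in lines if line.strip()]


def build_system_prompt(task: str, context: str) -> str:
    """Prepend the brand context to the task so the model writes against it."""
    return f"{task}\n\nFollow the brand context below.\n\n{context}"


def find_banned_phrases(draft: str, blacklist: list[str]) -> list[str]:
    """Return every blacklisted phrase that appears in the draft (case-insensitive)."""
    lowered = draft.lower()
    return [phrase for phrase in blacklist if phrase in lowered]


if __name__ == "__main__":
    # Build a throwaway context folder so the example runs anywhere.
    with tempfile.TemporaryDirectory() as tmp:
        folder = Path(tmp)
        (folder / "brand_voice.md").write_text("Short sentences. No hype.", encoding="utf-8")
        (folder / "blacklist.txt").write_text("synergy\ngame-changer\n", encoding="utf-8")

        prompt = build_system_prompt("Write a LinkedIn post.", load_context(folder))
        banned = load_blacklist(folder / "blacklist.txt")

    print(prompt)
    print(find_banned_phrases("This launch is a game-changer.", banned))
```

Screening drafts after generation is a simple backstop: even with the blacklist in the prompt, a model can still emit a banned phrase, and a plain substring check flags it before a human editor sees the draft.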