The AI adoption gap is the widening divide between what AI can theoretically automate and what businesses are actually deploying in production. Despite modern foundation models crossing the intelligence threshold for most enterprise tasks, observed deployment sits at a fraction of that potential - because the bottleneck is workflow integration, not AI capability.

The AI adoption gap has become the defining operational challenge for mid-market and scaling enterprises this year. Recent data from Anthropic paints a stark picture of the current enterprise AI landscape: production deployment lags far behind what the models can already do.

In this industry research analysis, we examine the widening delta between theoretical AI coverage and observed business integration. The data reveals a critical truth for operations leaders - the primary bottleneck to AI ROI is no longer a lack of raw artificial intelligence, but rather the immense friction of integrating these capabilities into existing operational workflows.

The data behind the AI adoption gap

The research visualizes this adoption gap through two distinct metrics across various industries: theoretical AI coverage (the percentage of industry tasks that models are capable of automating) and observed AI coverage (the actual percentage of work currently being automated by businesses in the real world).
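To make the two metrics concrete, here is a minimal sketch of how the gap between them could be computed. The `Coverage` class, its field names, and the `realized_share` ratio are assumptions introduced for illustration, and the numbers are placeholders, not figures from the research.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Coverage:
    """Hypothetical container for the two metrics, as fractions between 0 and 1."""
    theoretical: float  # share of tasks models are capable of automating
    observed: float     # share of tasks businesses actually automate today

    @property
    def gap(self) -> float:
        # Absolute adoption gap: capability that exists but is not deployed.
        return self.theoretical - self.observed

    @property
    def realized_share(self) -> float:
        # Fraction of the theoretical potential that is actually in production.
        return self.observed / self.theoretical if self.theoretical else 0.0


# Placeholder values for illustration only; NOT taken from the research.
example = Coverage(theoretical=0.80, observed=0.20)
print(f"gap={example.gap:.2f}, realized={example.realized_share:.0%}")
```

Tracking the ratio alongside the absolute gap is a design choice: it lets industries with very different theoretical ceilings be compared on the same scale.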

The theoretical capabilities offer no major surprises to those following AI development. Modern foundation models exhibit exceptional proficiency in highly structured, logic-heavy disciplines. The data shows massive theoretical automation potential in coding, mathematics, finance, and engineering roles. Furthermore, these systems demonstrate remarkable capabilities in legal analysis and essentially all general office and administrative work.

However, the observed AI coverage - the reality of what businesses are actually doing - tells a completely different story. Across nearly every sector, the actual deployment of AI sits at a fraction of its theoretical potential. The technology is capable in principle of automating complex tasks, yet operations teams are still executing those same workflows by hand.

This gap represents a massive operational inefficiency and a missed opportunity for scaling businesses. It raises the critical question - if the models are smart enough to do the work, why isn't the work getting done?

The end of the foundation model race for enterprise

For the past two years, the enterprise narrative has been dominated by the foundation model race. Businesses have eagerly awaited the next release from OpenAI, Anthropic, or Google, assuming that smarter models would automatically translate into better business outcomes.

The current data effectively signals the end of this phase for the average enterprise AI user. For a mid-market company scaling between $5 million and $250 million in revenue, gaining access to a slightly more advanced model will not matter this year. The intelligence threshold required to automate standard business logic has already been crossed.

Operations leaders are experiencing severe model fatigue. A foundation model that scores 5% higher on a standardized legal reasoning benchmark provides zero material value to a Chief Operating Officer if that model cannot securely access the company's contract repository, apply the specific corporate legal playbook, and route the finalized document through the appropriate approval channels.

The raw cognitive power of AI is no longer the variable that dictates success. The intelligence is a commodity; the integration is the competitive advantage.

Why workflow integration is the true operational hurdle

The core reason the AI adoption gap exists is that foundation models do not integrate themselves into your business. They exist as isolated brains, accessible via chat interfaces or basic APIs, entirely disconnected from the nervous system of your company.

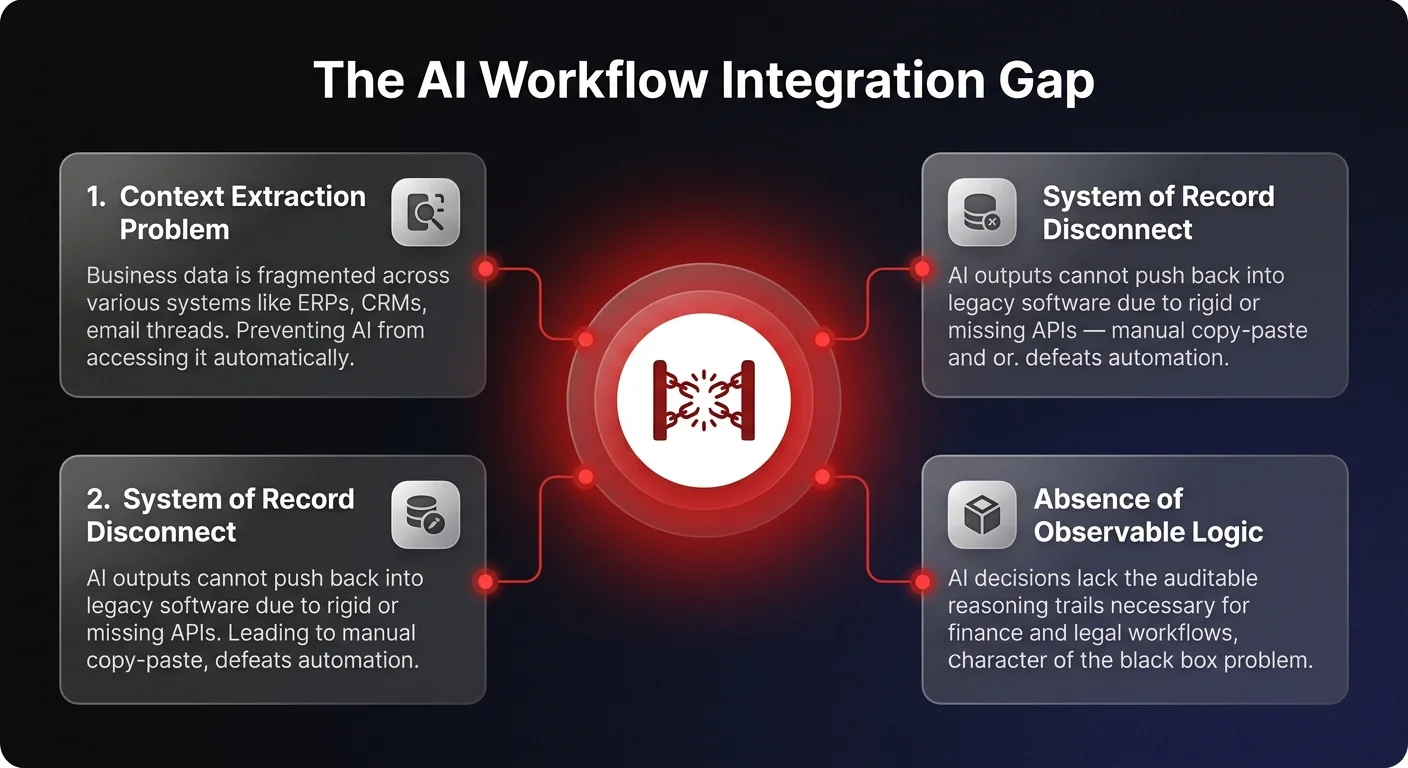

The hard part of AI is integrating it into your existing workflows. To move a task from theoretical coverage to observed coverage, businesses must run a gauntlet of integration challenges:

The context extraction problem

AI models need context to perform administrative, financial, or legal work. In a theoretical lab setting, all context is provided perfectly in the prompt. In reality, a company's context is fragmented across ERPs, CRMs, internal wikis, email threads, and Slack messages. Extracting this data securely and feeding it to an AI model in real time is a massive engineering hurdle for standard IT teams.
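As a rough illustration of what that extraction step involves, the sketch below gathers snippets from several systems, drops anything the caller is not cleared to see, and labels each piece with its source before it goes into a prompt. The `Snippet` type, the `build_context` function, the source labels, and the size cap are all hypothetical; a real pipeline would also need connectors, authentication, and retrieval logic that are not shown here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snippet:
    """Hypothetical unit of context pulled from one business system."""
    source: str              # e.g. "crm", "wiki", "email" (illustrative labels)
    text: str
    restricted: bool = False  # True if the content needs elevated access


def build_context(snippets, allow_restricted=False, max_chars=2000):
    """Join permitted snippets, each tagged with its source, up to a size cap."""
    parts = []
    used = 0
    for snippet in snippets:
        # Security filter: skip content the caller is not cleared to see.
        if snippet.restricted and not allow_restricted:
            continue
        entry = f"[{snippet.source}] {snippet.text}"
        # Stop once the assumed prompt budget would be exceeded.
        if used + len(entry) > max_chars:
            break
        parts.append(entry)
        used += len(entry)
    return "\n".join(parts)


# Made-up example records, for illustration only.
snippets = [
    Snippet("crm", "Acme renewal due"),
    Snippet("email", "salary info", restricted=True),
    Snippet("wiki", "Refund policy: 30 days"),
]
context = build_context(snippets)
print(context)
```

Even this toy version has to make decisions about access control, provenance, and prompt size, which hints at why the full problem is an engineering project rather than a prompt-writing exercise.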

The system of record disconnect

Once an AI model generates a useful output - such as reconciling an invoice, drafting a legal response, or categorizing a customer support ticket - that output must be pushed back into a system of record. Legacy software often features rigid APIs, fragile webhooks, or no programmatic access at all, forcing employees to manually copy and paste AI outputs, entirely defeating the purpose of automation.

The absence of observable logic

When an employee makes a decision, a manager can ask them to explain their reasoning. When a standard LLM makes a decision, it often acts as a black box. Operations leaders cannot deploy AI at scale into finance or legal workflows without observable logic - a clear, auditable trail of how the AI reached its conclusion, what data it referenced, and what rules it followed.
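One way to picture observable logic is as a structured record written every time the AI makes a decision, capturing the three things named above: the conclusion, the data referenced, and the rules followed. The sketch below is a minimal version under that assumption; the `DecisionRecord` name, its fields, and the example values are hypothetical and not drawn from any particular product.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    """Hypothetical audit entry for a single AI decision."""
    task: str
    conclusion: str
    data_referenced: list  # which documents or records the model used
    rules_applied: list    # which playbook rules it followed
    decided_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        # Serialize with stable key order so entries are easy to diff and audit.
        return json.dumps(asdict(self), sort_keys=True)


# Made-up example values, for illustration only.
record = DecisionRecord(
    task="invoice reconciliation",
    conclusion="matched",
    data_referenced=["invoice INV-EXAMPLE", "purchase order PO-EXAMPLE"],
    rules_applied=["amounts must match within tolerance"],
)
print(record.to_json())
```

A reviewer in finance or legal could then answer "why did the system do this?" by reading the log entry rather than by re-running the model.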